Tesla AI5 Chip Leaked: Near H100 Performance, 9-Month Dev Cycle

“It will be one of the highest-volume AI chips ever produced.”

Elon Musk recently announced on X (formerly Twitter): “Congratulations to the @Tesla_AI chip design team — AI5 tape-out is complete! AI6, Dojo3, and other exciting chips are in development.”

The term “tape-out” — a legacy phrase from the era of shipping physical magnetic tapes with chip designs — signifies successful completion of the final design stage and entry into trial manufacturing.

Performance Benchmarks & Architecture

Compared to Tesla’s prior-generation AI4 (2023, 500+ TOPS), AI5 delivers ~5× higher performance per chip — translating to an ~8× uplift in total compute, 9× increase in memory capacity, and 5× boost in memory bandwidth.

- System-level throughput: 2000–2500 TOPS (vs. AI4’s 300–500 TOPS)

- Single-chip capability: Roughly matches NVIDIA’s Hopper H100

- Dual-AI5 configuration: Approaches Blackwell B200-class performance

- Power envelope: ~150W (edge-optimized); scalable higher for data centers

- Estimated transistor count: 108–125 billion (TSMC 3nm process)

- Memory subsystem: 12× SK hynix H58G66DK9QX170N LPDDR5X modules → 96GB @ 1.15 TB/s bandwidth

- Die size: ~430 mm² (half-reticle), enhancing yield and cost efficiency vs. full-reticle chips like H100 (<800 mm²)

- Estimated cost: ~10% of H100’s price point

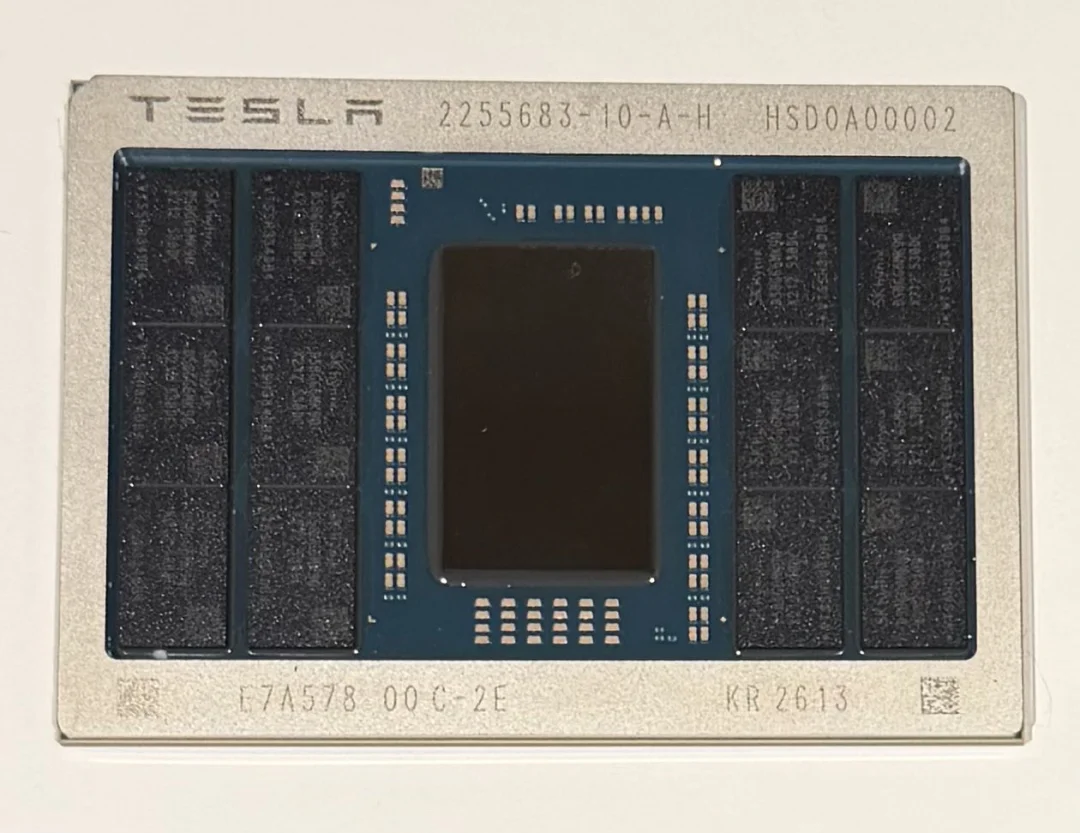

Packaging Innovation

AI5 adopts advanced in-package memory integration, drastically reducing latency versus traditional board-mounted DRAM. While the pictured version appears optimized for data-center workloads, automotive and Optimus variants are expected to use smaller, cost-optimized configurations (e.g., 32GB onboard).

Target Deployment Scenarios

| Use Case | Key Requirements | Status |

|---|---|---|

| Tesla Full Self-Driving (FSD) | High real-time inference, safety redundancy, low-latency sensor fusion | Targeting 2027 mass deployment; HW3/HW4 remain software-upgradable for now |

| Optimus Robot Platform | Real-time vision + force/torque feedback processing, low-power edge inference | Direct architectural alignment due to shared FSD software stack |

| xAI Data Centers | Hybrid training/inference support, distributed workload orchestration | Secondary use case — AI5 is primarily edge-optimized but adaptable |

Design Philosophy: Vertical Integration Over Generality

Unlike NVIDIA’s general-purpose GPU strategy — where silicon serves arbitrary models via CUDA — AI5 is purpose-built for one workload: running Tesla’s differentiable physics engine trained on 9 billion miles of real-world driving data.

Key optimizations include:

- ✅ Dedicated softmax accelerators, eliminating redundant silicon area and power waste common across generic GPUs

- ✅ Custom quantization pipelines, minimizing precision loss during inference without sacrificing speed

- ✅ Hardware-software co-design loop, enabling rapid iteration — e.g., the upcoming AI6 is slated for tape-out by December 2026 (just 9 months after AI5)

As independent researcher Shanaka Anslem Perera noted: “9 billion miles of driving data have been distilled into a single chip.”

Competitive Positioning: Not a Head-to-Head Rival

| Metric | NVIDIA H100 / B200 | Tesla AI5 |

|---|---|---|

| Peak Compute | 4.5 petaFLOPS (B200) | ~2.5 petaOPS (INT8, FSD-optimized) |

| Typical Power | Up to 1000W | ~150W (auto/robot), scalable |

| Use Flexibility | Universal model support | FSD/physics-engine-locked |

| Energy Efficiency | Baseline | 3–5× higher for target workloads |

| Cost Efficiency | Premium pricing | Estimated ~10× better value for Tesla’s stack |

This isn’t a chip designed to compete with NVIDIA — it’s engineered to eliminate NVIDIA as a dependency.

Compatibility with Existing Hardware?

A major community question: Can AI5 replace HW3 or HW4 units?

Musk responded directly: “HW4 is already sufficient for unsupervised FSD.”

Industry analysts and Reddit consensus suggest:

- ❌ No backward-compatible retrofitting planned for HW3 vehicles

- ❌ HW4/AI4 systems will continue receiving software enhancements

- ✅ New vehicle platforms (e.g., next-gen Cybertruck, RoboTaxi fleet, Optimus Gen2) will ship with AI5 natively

- 💡 Upgrade path likely involves hardware swaps (HW5) — not drop-in replacements

Why Build In-House Chips? Strategic Imperative

In a January 2026 livestream, Musk stated: “Global chip manufacturing capacity meets only ~2% of our projected demand.”

Relying on external suppliers creates bottlenecks in AI development velocity. With AI5, Tesla achieves:

- ⚡ 9-month iteration cycles, unshackled from foundry lead times and third-party roadmaps

- 🛡️ Full stack control, from silicon layout to neural compiler optimization

- 💰 Radical cost reduction, accelerating ROI on AI infrastructure at scale

Source: X Post | Original article by Lin Xin, 51CTO Tech Stack