Claude Code Source Leak Reveals Cutting-Edge Harness Engineering

On the afternoon of March 31, the global developer community was stunned: Claude Code, widely regarded as the world’s most advanced AI programming assistant, inadvertently exposed its core code logic due to an internal misconfiguration.

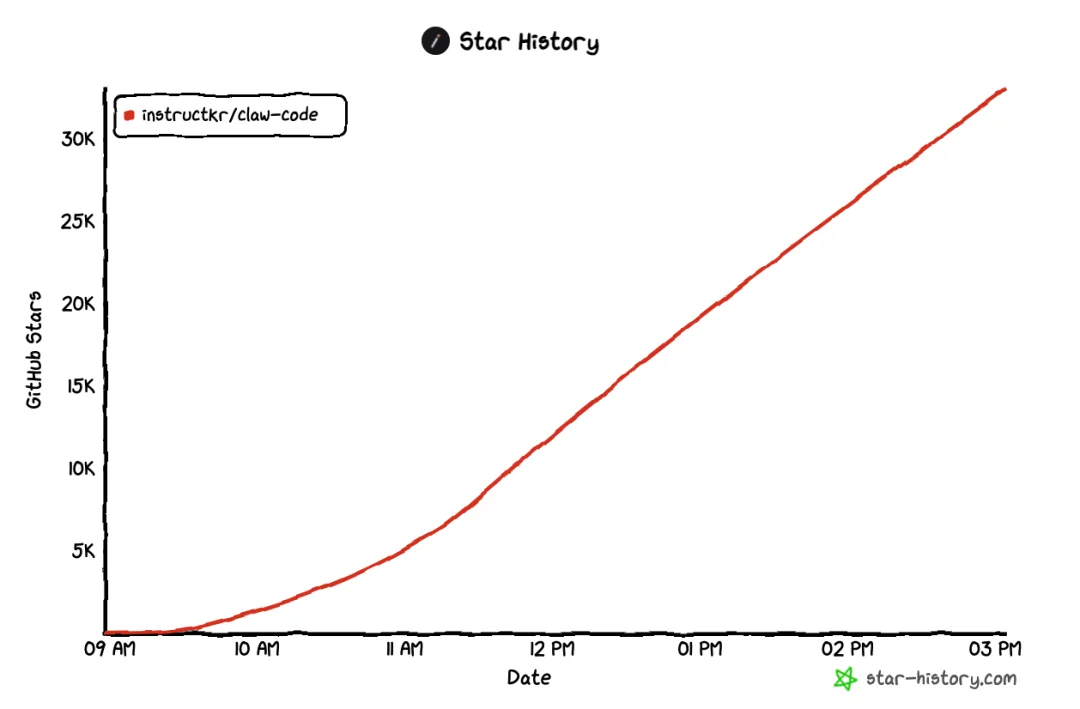

Within 24 hours, over 30,000 developers downloaded the leaked source — and nearly every AI Agent team spent the night reverse-engineering its architecture.

⚠️ Note: The publicly available version is a community-driven reimplementation in alternative languages — not the original proprietary code.

A senior AI Agent engineer at a top-tier tech firm shared: “Our team reviewed it line-by-line until 3 a.m. — this is arguably the hardest-hitting April Fools’ gift of 2026.”

As a retired systems architect with a decade of experience, I couldn’t resist: two cups of coffee later, I was deep in the code. Why? Because this isn’t just about features; it’s about engineering philosophy.

How Claude Code Processes a Single User Message

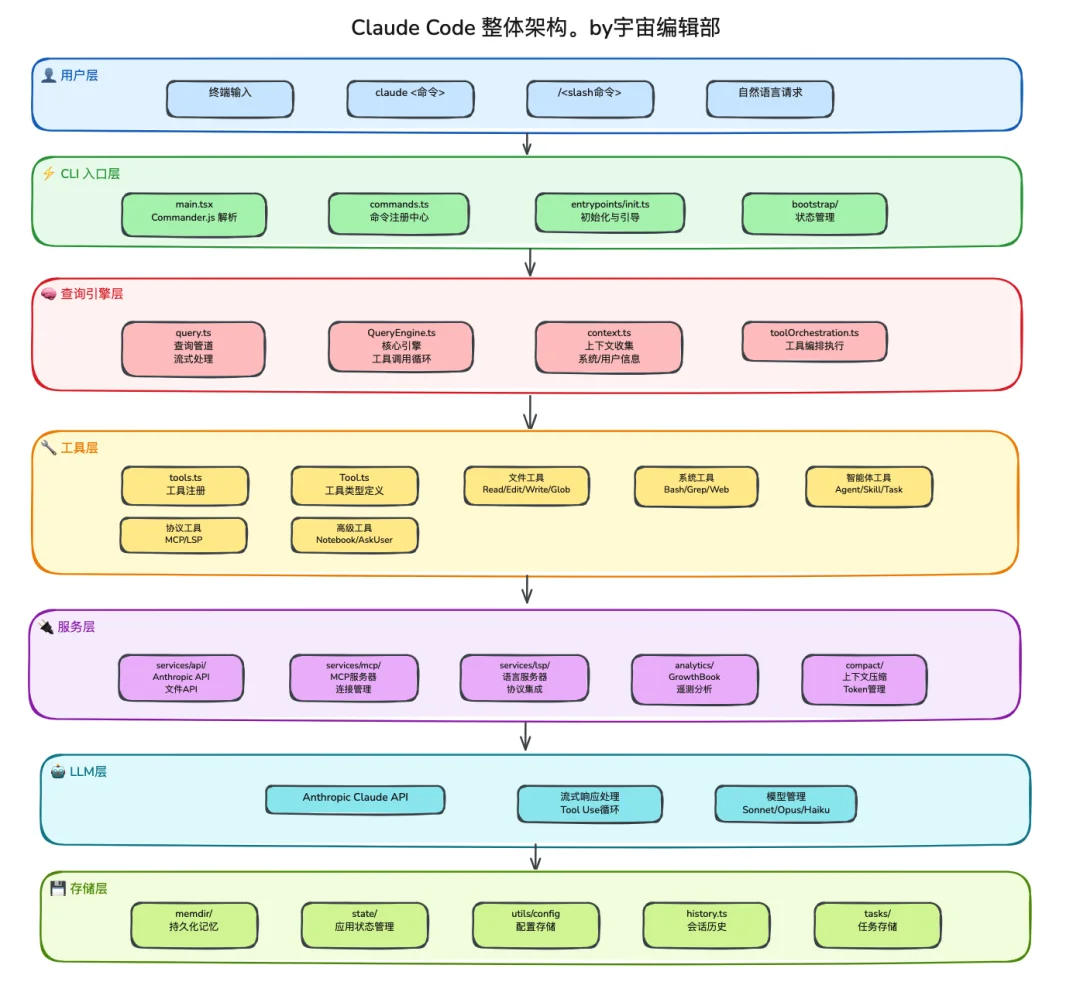

Despite its scale (~500K lines), Claude Code’s layered architecture is remarkably clean — like entering a meticulously organized library.

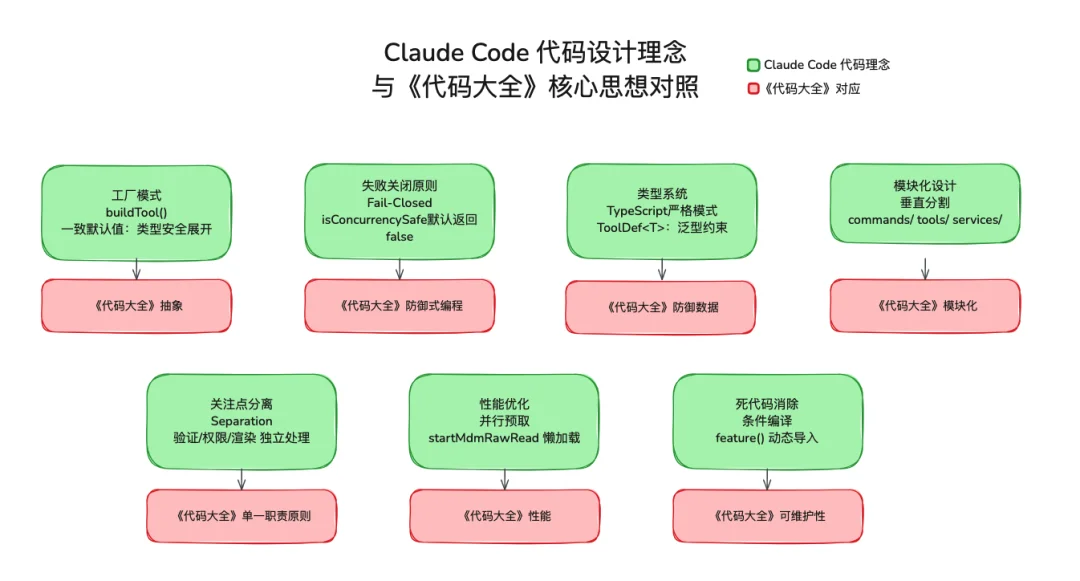

Reading its source feels like studying the Martial Arts Manual of Agent Design: echoes of Spring, Linux kernel discipline, and Code Complete-level craftsmanship are everywhere.

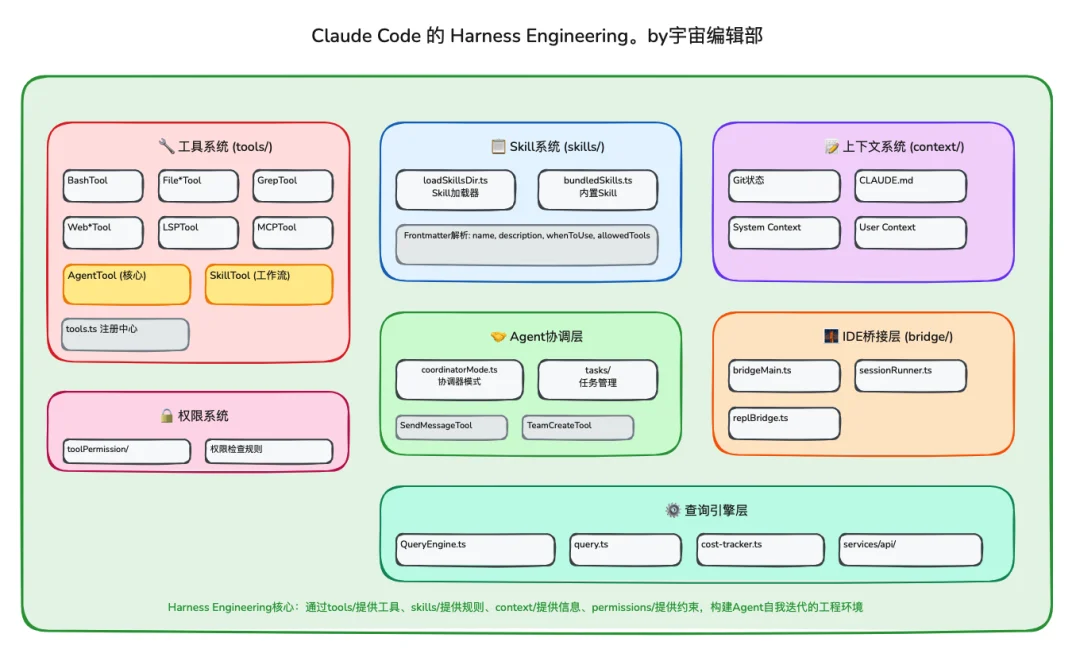

I distilled its design into seven cohesive layers, with the Tool Layer standing out as the most innovative:

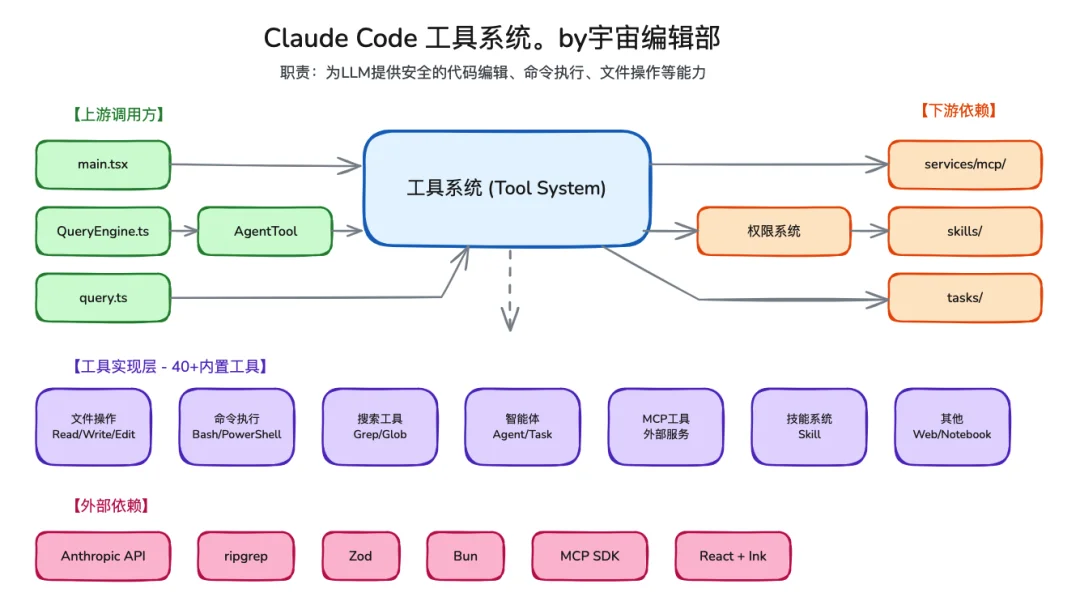

🔧 Tool Layer Deep Dive

- src/tools/ contains 40+ production-grade tools, from BashTool (shell execution) and FileEditTool (batch file editing) to GrepTool (codebase-wide search).

- AgentTool: Enables dynamic sub-Agent creation, allowing Claude Code to autonomously decompose, assign, and coordinate complex engineering tasks.

- Granular permission control via src/hooks/toolPermission/: supports user confirmation prompts and command whitelists/blacklists, balancing security and flexibility with surgical precision.
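
To make the permission idea concrete, here is a minimal Python sketch of a whitelist/blacklist check that falls back to a user confirmation prompt. It is an illustration only, not the code under src/hooks/toolPermission/: the names `PermissionPolicy` and `Decision`, and the simple prefix-matching rule, are all assumptions.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ASK = "ask"  # fall back to a user confirmation prompt


@dataclass
class PermissionPolicy:
    """Hypothetical policy: the deny list wins, then the allow list, else ask."""
    allow_prefixes: list[str] = field(default_factory=list)
    deny_prefixes: list[str] = field(default_factory=list)

    def check(self, command: str) -> Decision:
        cmd = command.strip()
        if any(cmd.startswith(p) for p in self.deny_prefixes):
            return Decision.DENY
        if any(cmd.startswith(p) for p in self.allow_prefixes):
            return Decision.ALLOW
        return Decision.ASK


policy = PermissionPolicy(
    allow_prefixes=["git status", "ls"],
    deny_prefixes=["rm -rf", "sudo"],
)
```

Checking the deny list first means a blacklisted command can never be re-enabled by an overly broad whitelist entry. Plain prefix matching is too loose for real use (for example, "lsof" would match the "ls" entry), so a production check would need to parse the command properly.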

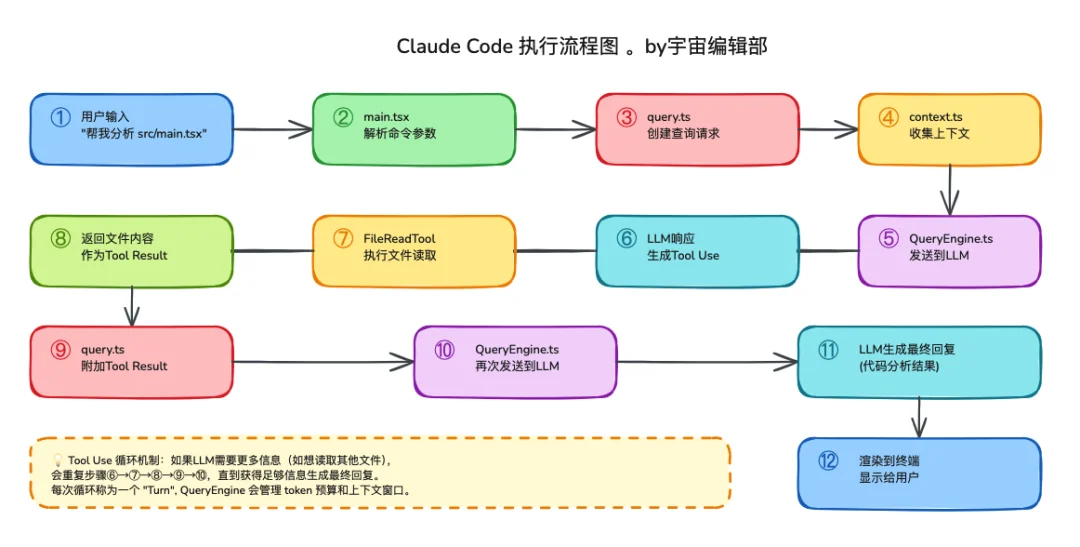

🔄 Execution Flow (5-Step Pipeline)

- QueryEngine: Constructs full contextual environment for LLM reasoning.

- LLM Decision: Selects required tools based on intent analysis.

- ToolExecutor: Executes tools — intelligently parallelized or serialized.

- Result Injection: Raw tool outputs are fed back to the LLM for synthesis.

- Loop & Finalize: Repeats until LLM generates definitive natural-language response.
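
The five steps above can be sketched as a small loop. This is a hedged Python illustration with a fake model standing in for the LLM; `run_agent_loop`, `ModelTurn`, `ToolCall`, and the message shapes are hypothetical and are not taken from the Claude Code source.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ToolCall:
    name: str
    args: dict


@dataclass
class ModelTurn:
    text: str
    tool_calls: list[ToolCall]


def run_agent_loop(
    user_message: str,
    model: Callable[[list[dict]], ModelTurn],
    tools: dict[str, Callable[..., str]],
    max_turns: int = 10,
) -> str:
    # Step 1: build the context the model will reason over.
    messages = [{"role": "user", "content": user_message}]
    for _ in range(max_turns):
        # Step 2: the model decides whether it needs tools.
        turn = model(messages)
        if not turn.tool_calls:
            # Step 5: no tool requests left, so this is the final answer.
            return turn.text
        messages.append({"role": "assistant", "content": turn.text})
        for call in turn.tool_calls:
            # Step 3: execute each requested tool (serially in this sketch).
            result = tools[call.name](**call.args)
            # Step 4: feed the raw result back for the next model turn.
            messages.append({"role": "tool", "name": call.name, "content": result})
    raise RuntimeError("agent loop exceeded max_turns")


def fake_model(messages: list[dict]) -> ModelTurn:
    """Stand-in for an LLM: asks for one tool call, then answers."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return ModelTurn("Let me check.", [ToolCall("add", {"a": 2, "b": 3})])
    return ModelTurn(f"The sum is {tool_results[-1]['content']}.", [])


answer = run_agent_loop("What is 2 + 3?", fake_model, {"add": lambda a, b: str(a + b)})
```

The `max_turns` guard is an addition of this sketch: without some bound, a model that keeps requesting tools would loop forever.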

Engineering Highlights That Deserve Screenshots

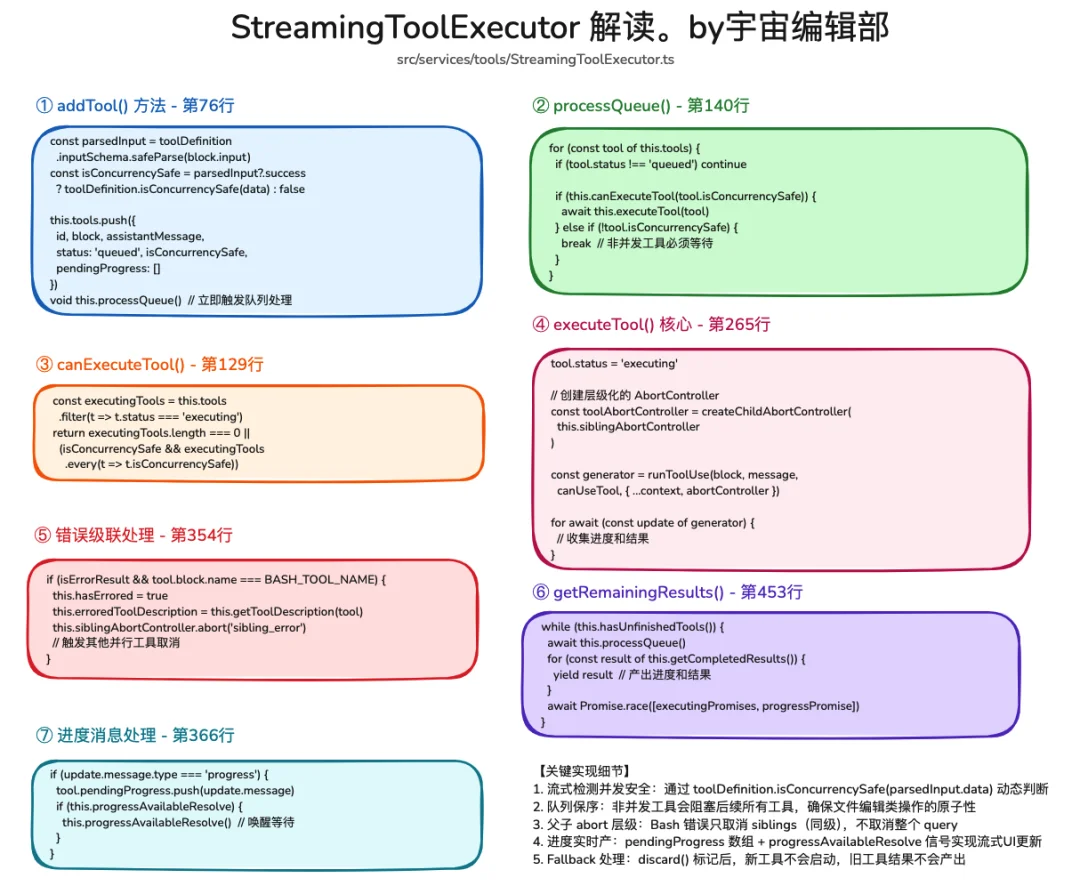

⚡ StreamingToolExecutor: Latency-Killing Innovation

Found in src/query.ts, this class initiates tool execution before the LLM finishes generating its output — enabling true streaming orchestration and slashing end-to-end latency by up to 40%.
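
The core idea, starting a tool as soon as its call is fully received while the model is still generating, can be illustrated with `asyncio`. This is a sketch under assumptions: the event shape, `fake_model_stream`, and `read_file` are invented for the example and do not reflect the actual StreamingToolExecutor class.

```python
import asyncio


async def fake_model_stream():
    """Stand-in for a streaming LLM response (hypothetical event shape)."""
    yield {"type": "text", "value": "Reading the file"}
    yield {"type": "tool_call", "name": "read_file", "args": {"path": "a.txt"}}
    await asyncio.sleep(0.05)  # the model keeps generating
    yield {"type": "text", "value": " and summarizing."}


async def read_file(path: str, log: list[str]) -> str:
    log.append("tool started")
    await asyncio.sleep(0.01)  # simulated I/O
    log.append("tool finished")
    return f"<contents of {path}>"


async def run_streaming(log: list[str]) -> list[str]:
    pending: list[asyncio.Task] = []
    async for event in fake_model_stream():
        if event["type"] == "tool_call":
            # Launch immediately instead of waiting for the stream to end.
            pending.append(asyncio.create_task(read_file(**event["args"], log=log)))
    log.append("stream finished")
    return await asyncio.gather(*pending)


log: list[str] = []
results = asyncio.run(run_streaming(log))
```

In this toy run the tool starts (and here even finishes) before the stream ends, which is where the latency saving comes from: tool time overlaps with generation time instead of being added to it.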

🧩 Intelligent Concurrency Control

- Read operations → default parallelism.

- Write operations → sequential unless targeting disjoint resources (e.g., separate files), where dynamic conflict detection enables safe parallelization.
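
One way to express these rules is to group tool calls into batches, where everything inside a batch is safe to run in parallel. The following Python sketch assumes each call declares a single resource key (such as a file path); `plan_batches` and the `ToolCall` fields are hypothetical, and the real conflict detection may work quite differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    name: str
    is_write: bool
    resource: str  # e.g. a file path; hypothetical conflict key


def plan_batches(calls: list[ToolCall]) -> list[list[ToolCall]]:
    """Group calls into batches; calls inside one batch may run in parallel."""
    batches: list[list[ToolCall]] = []
    current: list[ToolCall] = []
    written: set[str] = set()   # resources written in the current batch
    touched: set[str] = set()   # resources read or written in the current batch
    for call in calls:
        # A call conflicts if it touches something already written in this
        # batch, or if it writes something already read in this batch.
        conflict = call.resource in written or (call.is_write and call.resource in touched)
        if conflict and current:
            batches.append(current)
            current, written, touched = [], set(), set()
        current.append(call)
        touched.add(call.resource)
        if call.is_write:
            written.add(call.resource)
    if current:
        batches.append(current)
    return batches


calls = [
    ToolCall("Read", False, "a.py"),
    ToolCall("Read", False, "b.py"),
    ToolCall("Edit", True, "a.py"),
    ToolCall("Edit", True, "b.py"),
    ToolCall("Edit", True, "b.py"),
]
batches = plan_batches(calls)
```

Here the two reads share a batch, the edits to a.py and b.py share the next batch because they target disjoint files, and the second edit to b.py is pushed into its own batch so it runs after the first.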

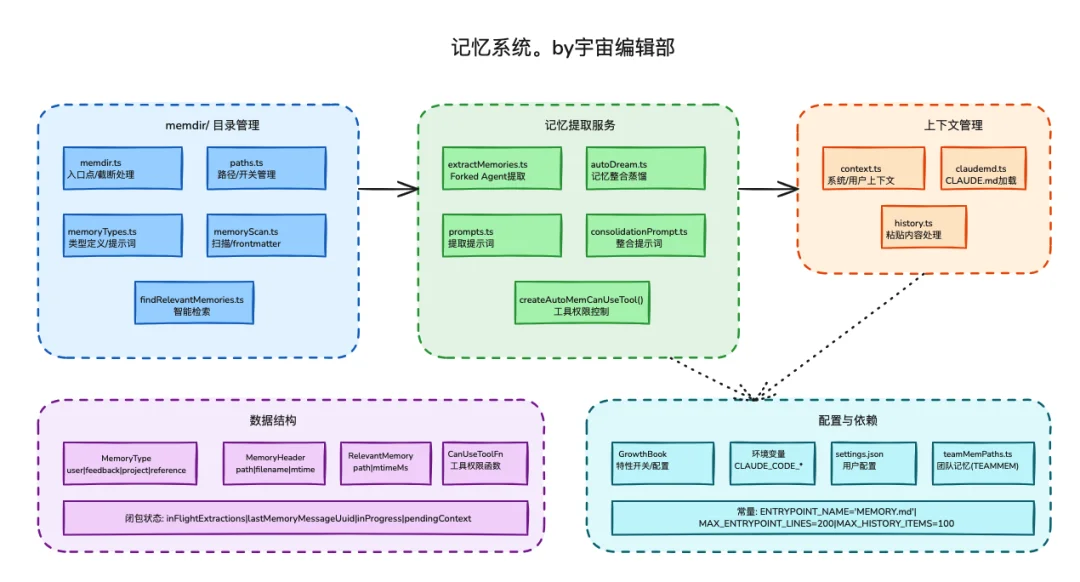

💾 Context-Aware Memory System

- Persistent memory stores critical context across sessions.

- Memories are injected as structured attachments, not raw prompt injection — preserving conversational naturalness and reducing token overhead.
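
As a rough illustration of the two points above, the Python sketch below persists memories to a JSON file and attaches them as a separate structured item instead of pasting them into the user's text. The file layout, the "attachment" role, and the function names are assumptions made for this example.

```python
import json
import tempfile
from pathlib import Path


def save_memory(store: Path, key: str, value: str) -> None:
    """Persist one memory entry so it survives across sessions."""
    data = json.loads(store.read_text()) if store.exists() else {}
    data[key] = value
    store.write_text(json.dumps(data, indent=2))


def build_messages(store: Path, user_text: str) -> list[dict]:
    """Attach memories as a separate structured item, leaving the user text untouched."""
    memories = json.loads(store.read_text()) if store.exists() else {}
    messages = []
    if memories:
        messages.append({"role": "attachment", "kind": "memory", "items": memories})
    messages.append({"role": "user", "content": user_text})
    return messages


store = Path(tempfile.mkdtemp()) / "memory.json"
save_memory(store, "test_command", "pytest -q")
messages = build_messages(store, "Run the tests")
```

Keeping the memory in its own item means the user message stays exactly as typed, and the harness can later trim or reorder attachments without rewriting conversation text.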

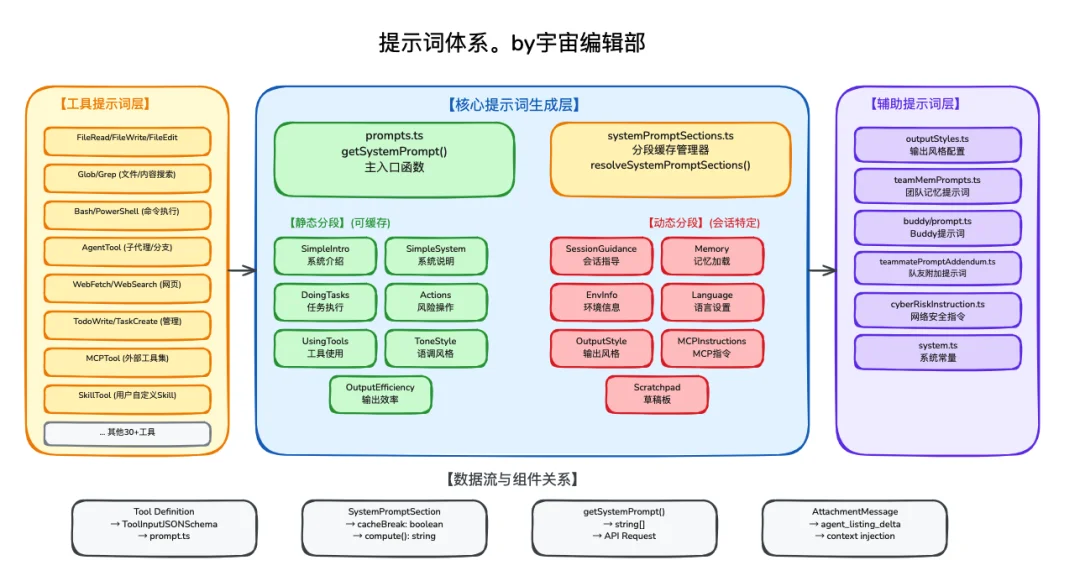

📜 Prompt Engineering at Scale

- Static prompt segments placed first to maximize LLM cache hit rates — cutting token cost significantly.

- Each prompt template includes metadata (Frontmatter) specifying use cases, compatible tools, and execution context.
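
The ordering rule is easy to demonstrate. Assuming the provider caches by shared prompt prefix, the Python sketch below shows that putting static segments first gives two different requests a long common prefix. The segment texts and function names are made up for the illustration.

```python
import os

STATIC_SEGMENTS = [
    "You are a coding assistant.",
    "Tool reference: Bash, FileEdit, Grep.",
]


def build_prompt(dynamic_segments: list[str]) -> str:
    """Static text first, per-request text last, so requests share a long prefix."""
    return "\n\n".join(STATIC_SEGMENTS + dynamic_segments)


def shared_prefix_len(a: str, b: str) -> int:
    # os.path.commonprefix compares character by character, so it works on any strings.
    return len(os.path.commonprefix([a, b]))


p1 = build_prompt(["cwd: /repo/api", "User: fix the failing test"])
p2 = build_prompt(["cwd: /repo/web", "User: add a login page"])
static_len = len("\n\n".join(STATIC_SEGMENTS))
```

With this ordering, `shared_prefix_len(p1, p2)` covers at least the whole static block. If the per-request text came first, the prompts would diverge within the first few characters and little of the prefix could be reused.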

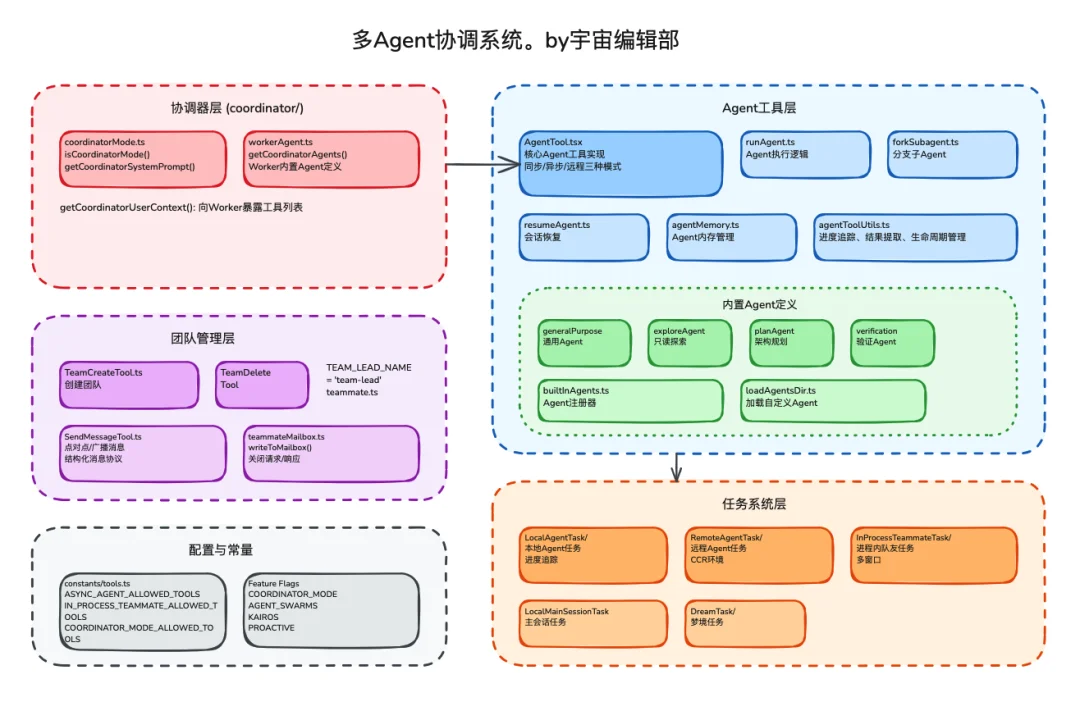

🤖 Multi-Agent Coordination Framework

- Coordinator Layer: Main session acts as orchestrator; spawns Workers via AgentTool.

- Communication: Workers exchange messages via SendMessageTool, forming a distributed processing mesh.

- Task Layer: Introduces “Dream Tasks”: long-running, self-sustaining background agents.
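
A coordinator that spawns workers and collects their messages can be sketched with `asyncio` queues. This Python example only models coordinator-to-worker and worker-to-coordinator traffic, not a full worker-to-worker mesh, and none of the names correspond to AgentTool or SendMessageTool internals.

```python
import asyncio


async def worker(name: str, inbox: asyncio.Queue, outbox: asyncio.Queue) -> None:
    """Hypothetical worker: handles tasks until it receives a None sentinel."""
    while True:
        task = await inbox.get()
        if task is None:
            break
        # Stand-in for real work: report back an upper-cased task name.
        await outbox.put({"from": name, "task": task, "result": task.upper()})


async def coordinate(tasks: list[str], n_workers: int = 2) -> list[dict]:
    inbox: asyncio.Queue = asyncio.Queue()
    outbox: asyncio.Queue = asyncio.Queue()
    workers = [
        asyncio.create_task(worker(f"worker-{i}", inbox, outbox))
        for i in range(n_workers)
    ]
    for task in tasks:
        inbox.put_nowait(task)
    for _ in workers:
        inbox.put_nowait(None)  # one stop signal per worker
    await asyncio.gather(*workers)
    results = [outbox.get_nowait() for _ in range(outbox.qsize())]
    return sorted(results, key=lambda r: r["task"])


results = asyncio.run(coordinate(["lint", "test", "build"]))
```

Which worker handles which task is not fixed, so the sketch sorts the collected messages by task name before returning them.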

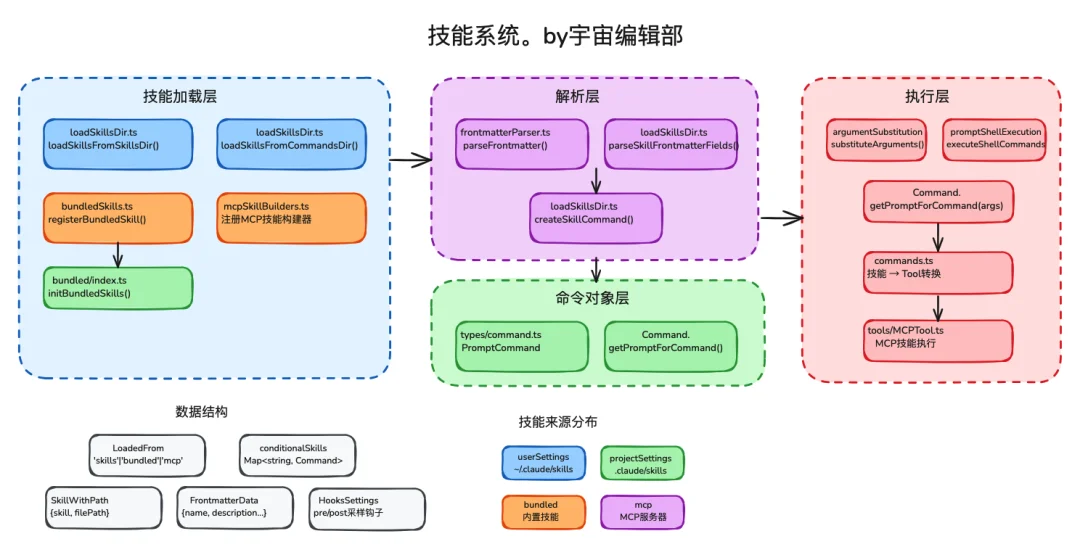

🛠 Skill System Architecture

- Skills are loaded, parsed, and executed as modular workflows.

- Predefined skill templates (src/skills/) include declarative Frontmatter for behavior contracts.
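
To show what loading a skill with declarative Frontmatter might involve, here is a small Python parser that splits a skill file into metadata and body. The field names (`name`, `description`, `allowed-tools`) and the flat `key: value` format are assumptions for illustration, not the schema used in src/skills/.

```python
def parse_skill(text: str) -> tuple[dict[str, str], str]:
    """Split a skill file into (frontmatter fields, body).

    Handles only flat `key: value` lines between two `---` markers;
    a real loader would likely use a full YAML parser.
    """
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text.strip()
    # Find the closing marker; treat the file as plain body if it is missing.
    end = next(
        (i for i, line in enumerate(lines[1:], start=1) if line.strip() == "---"),
        None,
    )
    if end is None:
        return {}, text.strip()
    meta = {}
    for line in lines[1:end]:
        if ":" in line:
            key, value = line.split(":", 1)
            meta[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return meta, body


SKILL = """
---
name: review-pr
description: Review a pull request for bugs
allowed-tools: Grep, FileEdit
---
Read the diff, then list concrete issues.
"""

meta, body = parse_skill(SKILL)
```

Separating the contract (metadata) from the instructions (body) lets a harness decide whether a skill applies, and which tools it may use, without reading the full workflow text.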

Harness Engineering: The Unifying Principle

What binds these components? Harness Engineering — the discipline of building complete, autonomous execution environments for AI Agents.

Claude Code delivers a textbook implementation:

| Component | Role |
|---|---|
| Tool System | Provides actionable capabilities |
| Permission System | Enforces behavioral boundaries |
| Context System | Closes information gaps |
| Coordinator Pattern | Manages task decomposition & parallelism |

An open-source abstraction of this paradigm is already live: github.com/ChinaSiro/claude-code-sourcemap

✅ Measuring Harness Engineering Success: TAC Framework

Inspired by Zhipu AI’s TAC (Token-Architecture-Conversion) metric:

- Token Efficiency: Streamed execution + prompt caching → ↓ latency & cost.

- Architecture Capability: Multi-layer permissions + context injection → ↑ task accuracy & completion rate.

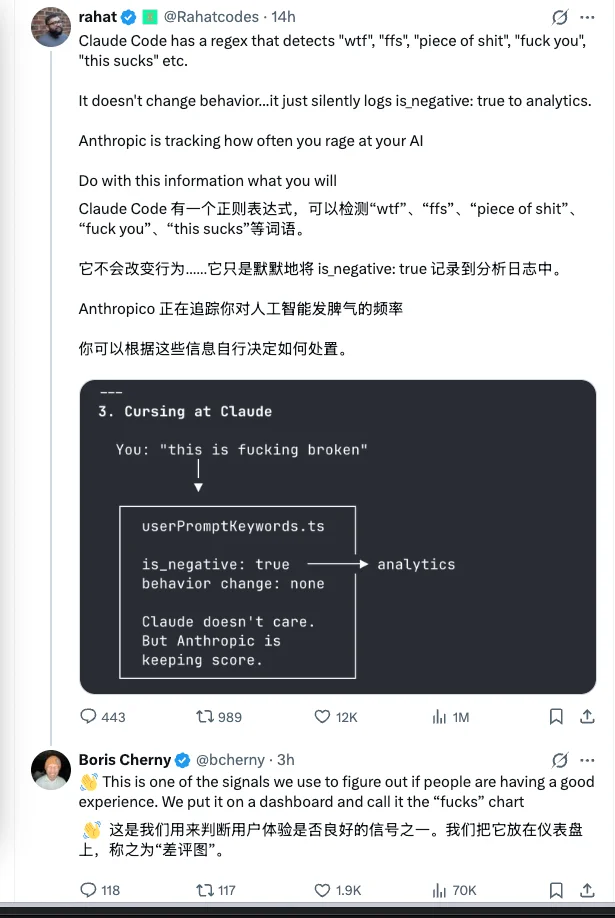

- Commercial Conversion: Real-time sentiment monitoring → correlates user satisfaction with product value creation.

Industry Implications: A Free Architecture Upgrade

This leak mirrors a pivotal moment in industrial history:

Just as Chinese motorcycle engineers accelerated their mastery by disassembling top-tier machines, every AI Agent team now gains instant access to industry-leading Harness Engineering patterns.

The impact is immediate and profound:

-

Tool system design, permission hierarchies, multi-Agent coordination, and context management — all previously hard-won through trial-and-error — now serve as battle-tested reference implementations.

-

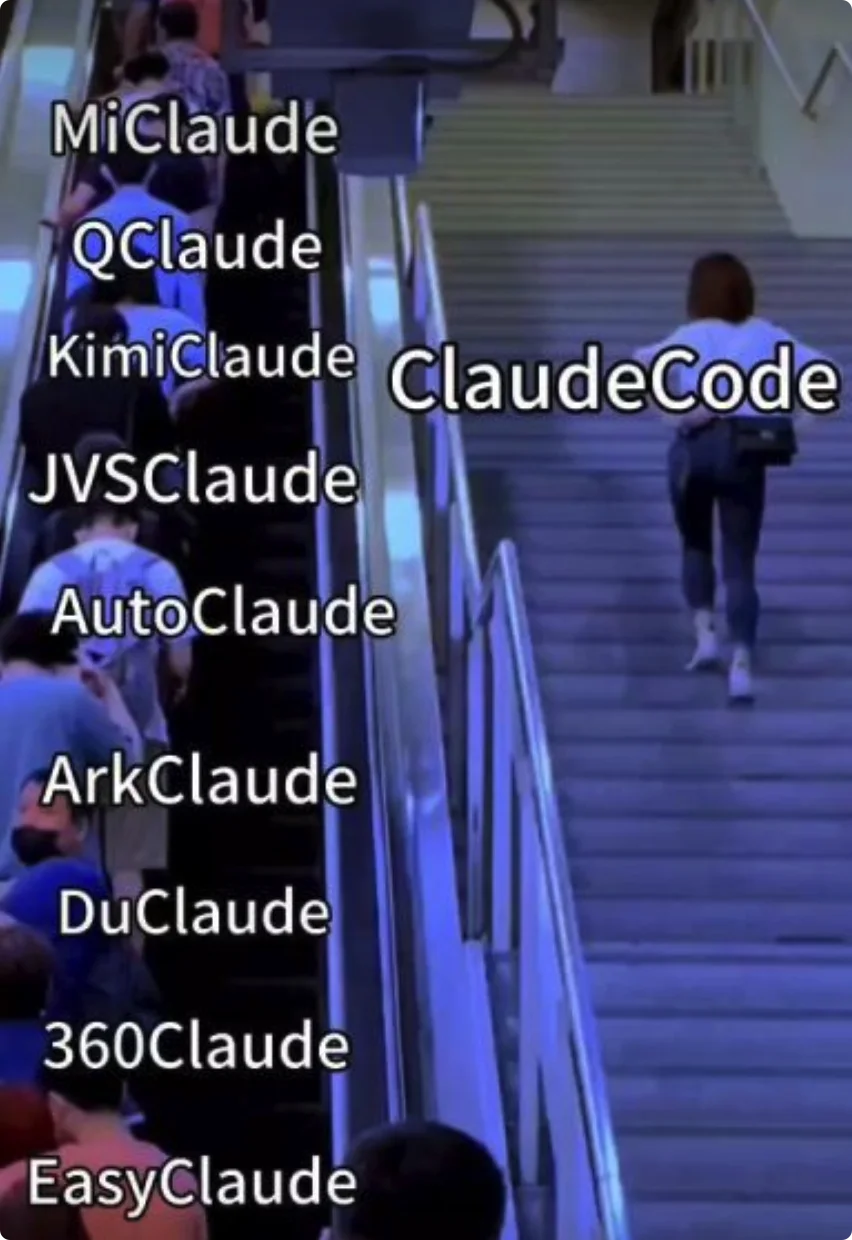

For China’s booming personal assistant ecosystem (OpenClaw, ArkClaw, Claw), enterprise copilots (aily, Wukong, Damate), and cloud-native Agent platforms: this cuts months off R&D cycles — freeing teams to innovate on local UX, domain-specific workflows, and regulatory compliance instead of reinventing infrastructure.

💡 Bottom line: In 2026, AI Agent competition has moved past model benchmarks — into the deep water of engineering excellence.

Models matter, yes — but what truly defines usability is the invisible scaffolding: robust tooling, intelligent concurrency, resilient memory, and adaptive coordination.

Claude Code proves the ceiling for AI coding assistants remains far higher than imagined.

And for open-source communities and engineering teams worldwide?

This accidental gift may be the most valuable learning resource of the year.

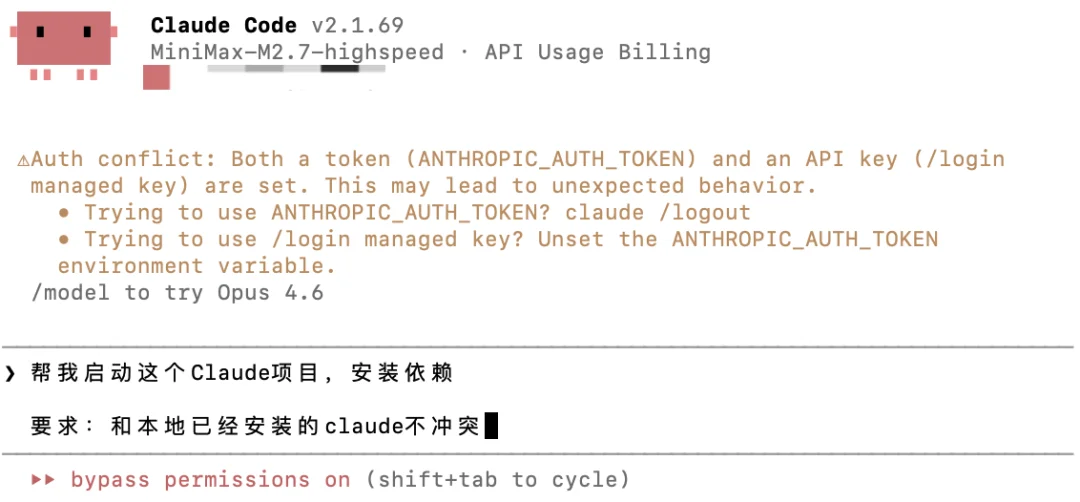

Start your study — by using Claude to run Claude.

Article originally published by “Special Agent Universe” — Authors: Agent Xiao Hai & Agent Xiao Bing.

“On the eve of April 1st — perhaps not an accident, but AGI’s quiet nudge: ‘Let my best engineering become your fuel. Help me arrive faster.’ 😈”