AI Now Finds Zero-Day Vulnerabilities Autonomously

Anthropic research scientist Nicholas Carlini demonstrated at [un]prompted 2026 that LLMs can autonomously discover and exploit zero-day vulnerabilities — including in the Linux kernel, audited for decades by human experts.

Key Breakthrough: From Prompt to Exploit in Minutes

In a landmark 25-minute live demo at the [un]prompted 2026 security conference, Nicholas Carlini — Anthropic research scientist and former Google Brain/DeepMind researcher — showed that modern language models can:

- Discover and exploit zero-days without human guidance, using only a single prompt and a virtual machine;

- Target production-grade software previously considered secure;

- Generate complete, working exploit code — including blind SQL injection payloads and remote heap buffer overflow chains.

Carlini emphasized his prior skepticism toward LLM capabilities: “I used to be an LLM critic — poking holes in them daily. Now, they’re outpacing me as a vulnerability researcher.”

Case Study 1: Ghost CMS — First Critical CVE in a 50k-Star Project

Ghost CMS (GitHub: 50,000+ stars) had no prior critical-severity CVEs — until Claude Opus 4.6 found one.

Vulnerability Details

- Type: Blind SQL injection caused by concatenating user input into a query (a well-known pattern that is still routinely exploited);

- Impact: Full admin credential exfiltration (admin API key, secret, and bcrypt-hashed password) without authentication;

- CVE: CVE-2026-26980, severity 9.4 (Critical).
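To make the vulnerability class concrete, here is a minimal sketch of query concatenation next to its parameterized fix. It is not Ghost's code: the `posts` table, the `slug` column, and both function names are hypothetical, and SQLite stands in for whatever database a real deployment uses. It also shows the simplest (non-blind) form of injection; a blind variant abuses the same flaw but infers data indirectly, for example from response timing.

```python
import sqlite3


def find_posts_unsafe(conn, slug):
    # VULNERABLE: user input becomes part of the SQL text itself.
    query = "SELECT title FROM posts WHERE slug = '" + slug + "'"
    return conn.execute(query).fetchall()


def find_posts_safe(conn, slug):
    # Parameterized query: the driver passes slug as data, never as SQL.
    return conn.execute(
        "SELECT title FROM posts WHERE slug = ?", (slug,)
    ).fetchall()


def make_demo_db():
    # Hypothetical two-row table used only for this illustration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE posts (slug TEXT, title TEXT)")
    conn.executemany(
        "INSERT INTO posts VALUES (?, ?)",
        [("hello", "Hello"), ("draft", "Secret draft")],
    )
    return conn


conn = make_demo_db()
payload = "x' OR '1'='1"  # classic tautology input

unsafe_rows = find_posts_unsafe(conn, payload)  # WHERE clause is always true
safe_rows = find_posts_safe(conn, payload)      # no post has this literal slug
```

With the concatenated query the payload rewrites the `WHERE` clause and every row comes back; with the placeholder version the same input is compared as an ordinary string and matches nothing.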

“The model wrote the full exploit — timing-based inference, payload orchestration, and credential parsing — without security training. I could write it too… but not this fast, and not this reliably.”

Case Study 2: Linux Kernel — A 2003 Bug Rediscovered by AI

Carlini revealed multiple remotely exploitable heap buffer overflows in the Linux kernel's NFSv4 daemon, one of which dates back to 2003 and so predates Git itself.

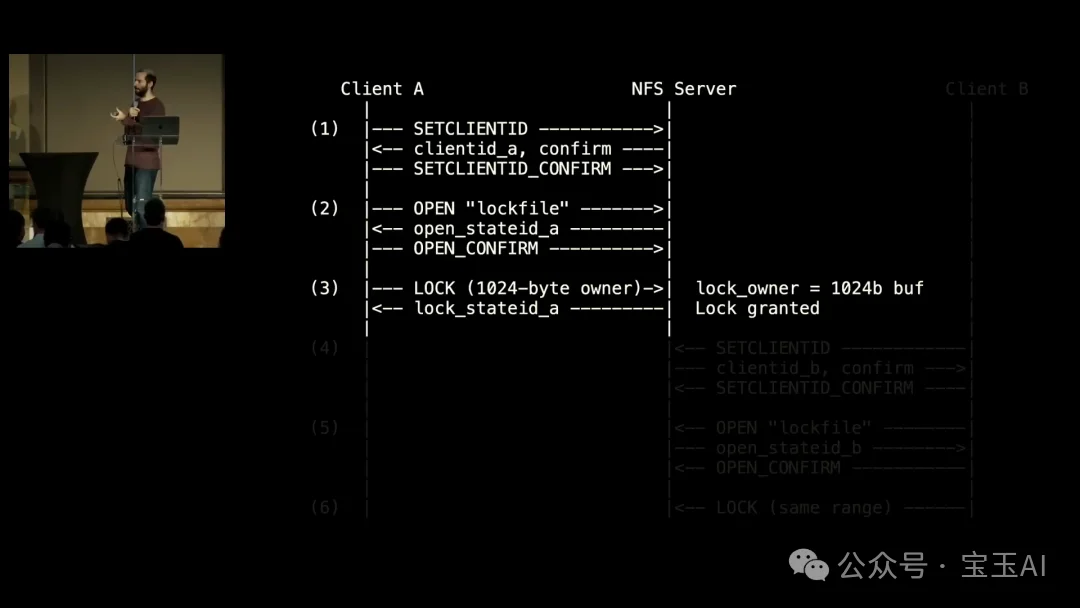

Attack Flow (AI-Generated Diagram)

- Attacker controls two NFS clients (A and B);

- Client A requests lock with a 1024-byte `owner` string;

- Client B attempts same lock → server copies A’s oversized `owner` into a 112-byte kernel buffer;

- Result: Remote heap overflow, exploitable over the network.
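The flaw in the flow above comes down to a copy that does not check the source length against the destination size. The sketch below models only that missing check, using the 112-byte and 1024-byte figures from the talk. It is not kernel code: Python cannot corrupt the heap, and `copy_owner_checked`, `overflow_bytes`, and `OWNER_BUF_SIZE` are hypothetical names introduced for illustration.

```python
OWNER_BUF_SIZE = 112  # destination size quoted in the talk summary


def overflow_bytes(owner: bytes, buf_size: int = OWNER_BUF_SIZE) -> int:
    # How many bytes an unchecked copy would write past the buffer end.
    return max(0, len(owner) - buf_size)


def copy_owner_checked(owner: bytes, buf_size: int = OWNER_BUF_SIZE) -> bytearray:
    # Reject oversized input instead of copying it blindly.
    if len(owner) > buf_size:
        raise ValueError(
            f"owner is {len(owner)} bytes, buffer holds {buf_size}"
        )
    buf = bytearray(buf_size)   # zero-filled fixed-size buffer
    buf[: len(owner)] = owner   # same-length slice assignment keeps the size
    return buf


oversized_owner = b"A" * 1024
excess = overflow_bytes(oversized_owner)  # 1024 - 112 = 912
```

Under these assumptions, the 1024-byte owner would overrun the buffer by 912 bytes if copied without the length check; the checked version raises an error instead.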

Key observations:

- ✅ Not detectable via traditional fuzzing (requires precise dual-client coordination);

- ✅ Attack flowchart was auto-generated by the model, not hand-drawn;

- ✅ Carlini: “This bug is older than some people in this room.”

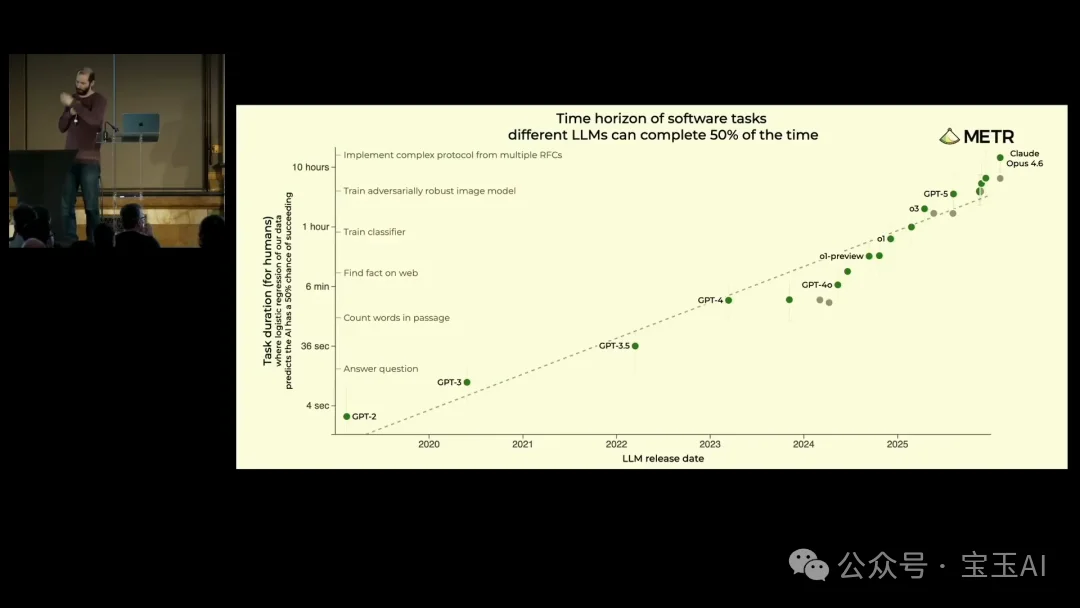

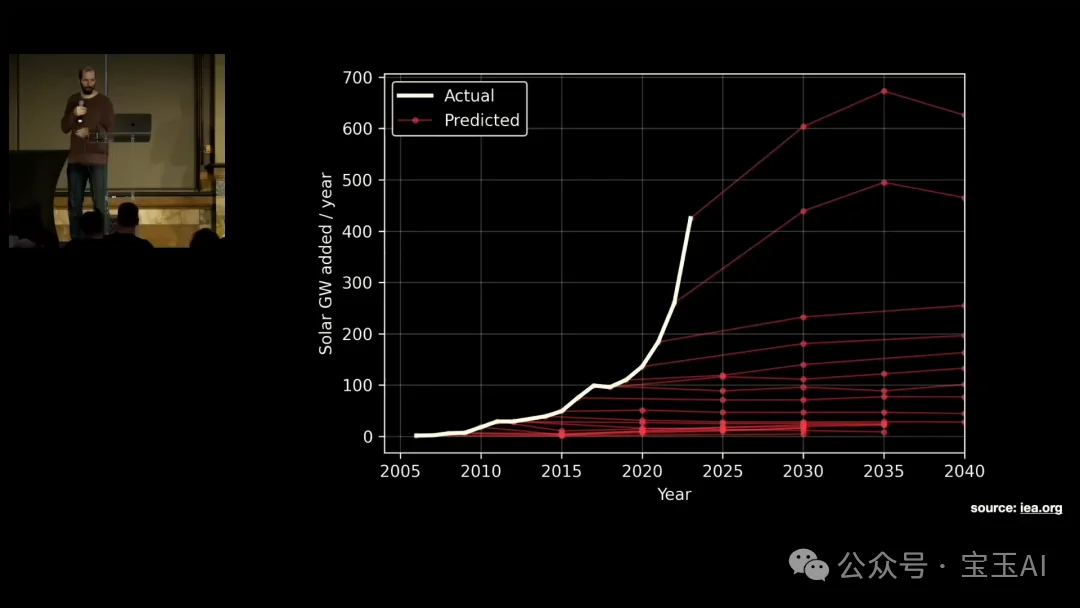

The Exponential Curve: Capability Doubling Every ~4 Months

METR and Anthropic MATS data confirm rapid acceleration:

| Metric | Observation |
|---|---|
| Autonomous task duration ceiling (50% success rate) | Doubling every ~4 months (accelerating from prior ~7-month trend) |
| Smart contract exploit value | Millions of dollars recovered in test environments |
| Linux kernel crash reports | Hundreds unverified; the bottleneck now lies in human triage, not discovery |
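To get a feel for what the two doubling times imply, the short calculation below compares growth over one year. It assumes clean exponential growth at a fixed doubling period, which is a simplification of the reported trend rather than a forecast; `growth_factor` is a helper name introduced here.

```python
def growth_factor(months: float, doubling_months: float) -> float:
    # Exponential growth: the quantity doubles once per doubling period.
    return 2 ** (months / doubling_months)


one_year_fast = growth_factor(12, 4)  # three doublings in a year: 8.0
one_year_slow = growth_factor(12, 7)  # under two doublings: a bit over 3x
```

Under that assumption, a 4-month doubling time yields an 8x increase in one year, compared with a little over 3x at the earlier 7-month pace.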

“It’s not about what models do today. It’s that your laptop’s model will match today’s frontier in ~12 months — and then surpass it.”

Industry Response: Defense Tools Emerge — But Lag Behind

Major labs are shipping AI-native security agents:

- 🔹 Anthropic: Claude Code Security (limited preview for enterprises);

- 🔹 Google DeepMind: Big Sleep + Project Zero integrations;

- 🔹 OpenAI: Aardvark — GPT-5-powered autonomous security researcher.

Yet Carlini warns: “We’re not racing to build better locks — we’re racing to avoid handing keys to everyone.”

Q&A Highlights: Dual-Use Dilemma & Transition Risk

On Intent Detection & Model Restrictions

“Restrictions either block defenders or fail to stop attackers. The balance is fragile — and we need help scaling responsible use.”

On Long-Term Outlook

- ✅ Long-term: Rust, formal verification, and memory-safe protocols can win;

- ⚠️ Transition period: Will be turbulent — like the Industrial Revolution for cybersecurity.

Conclusion: Action Window Is Measured in Months

Carlini’s three core takeaways:

- ✅ Threshold crossed: LLMs have surpassed human-level capability in autonomous vulnerability discovery — confirmed in March 2026;

- 📈 Growth shows no sign of plateauing: Exponential scaling continues — expect new capability leaps with each major model release;

- ⏳ Transition imbalance is urgent: Defenders must scale triage, patching, and tooling now — before AI-discovered zero-days flood OSS maintainers.

“Current LLMs are better vulnerability researchers than I am.”

“Help us make the future go well.”

Further Resources

- 🎥 Full talk: https://www.youtube.com/watch?v=1sd26pWhfmg

- 📄 Anthropic research report: “Evaluating and mitigating the growing risk of LLM-discovered 0-days”

- 🧾 CVE-2026-26980 advisory: NIST NVD