OpenAI Unveils Pentagon Pact with Three Red Lines

Published: March 1, 2026

Yesterday, we reported on OpenAI’s abrupt reversal, dubbed a “light-speed capitulation”: the company signed a major defense contract with the U.S. Department of Defense (DoD) after Anthropic publicly refused to grant full access to its AI systems. Today, OpenAI released key details of its agreement with the Pentagon, asserting that the contract enforces strict safeguards against misuse of its models, specifically banning large-scale domestic surveillance, autonomous weapons, and high-risk automated decision-making. According to OpenAI, these protections are fundamentally different from, and significantly stronger than, those in Anthropic’s prior proposal.

Context: The Anthropic Standoff

The backdrop to this development is Anthropic’s principled stance against DoD demands for unrestricted system access. Reports indicate that Anthropic’s founders clashed with Pentagon leadership over who holds final authority over AI safety controls, a dispute that ultimately resulted in Anthropic being labeled a “supply chain security risk” under the Trump administration.

That move drew widespread support for Anthropic across Silicon Valley, including from OpenAI, which publicly backed Anthropic’s position.

Then came the pivot: Sam Altman posted three consecutive statements announcing OpenAI’s agreement to deploy its models on classified DoD networks — igniting intense scrutiny and reputational backlash.

OpenAI’s “Three Red Lines” Framework

In response to mounting criticism, OpenAI issued a comprehensive public statement early today outlining enforceable boundaries built into the contract:

🔹 OpenAI: Our Three Red Lines

- No large-scale domestic surveillance of U.S. citizens;

- No use in command-and-control of autonomous weapons systems;

- No deployment in high-risk automated decision infrastructure, such as social credit-style frameworks.

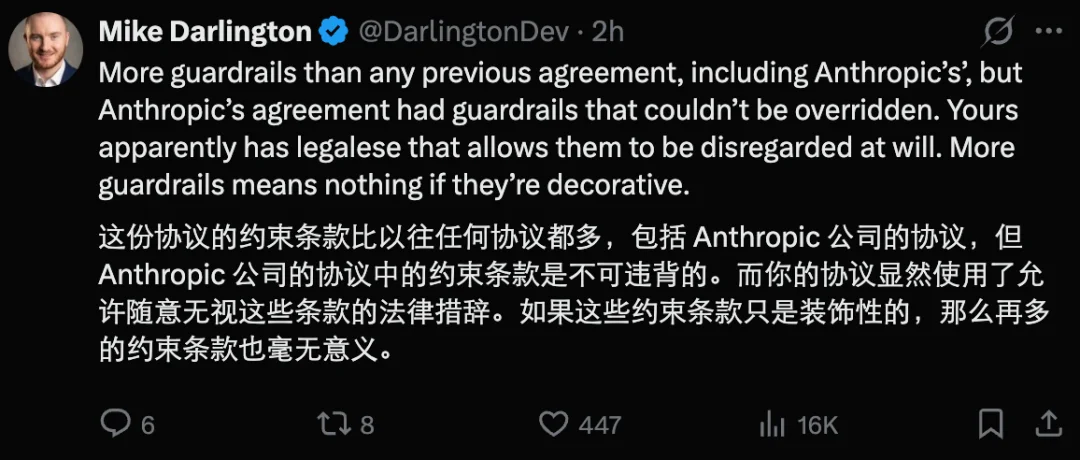

Unlike other AI labs — which reportedly weakened or eliminated technical guardrails in favor of policy-based usage restrictions — OpenAI asserts its approach combines technical enforcement, architectural control, and contractual binding.

✅ Enforcement Mechanisms

| Layer | Description |
|---|---|
| Cloud-Only Deployment | All models run exclusively in OpenAI-managed cloud environments, with no edge deployment, which OpenAI says makes real-time lethal autonomy infeasible. |
| Full Control Over Safety Stack | OpenAI retains sole authority over safety classification systems, model updates, and alignment monitoring, with continuous oversight by cleared personnel. |
| Legally Binding Contract Clauses | Explicit prohibitions tied to U.S. law (e.g., DoD Directive 3000.09, Fourth Amendment, Posse Comitatus Act); violations trigger automatic termination rights. |

📜 Core Contractual Provisions

1. Deployment Architecture

- Pure-cloud execution only; no on-premise or embedded model distribution.

- Zero release of unguarded or pre-alignment models.

- Real-time red-line compliance verification via OpenAI-operated classifiers.

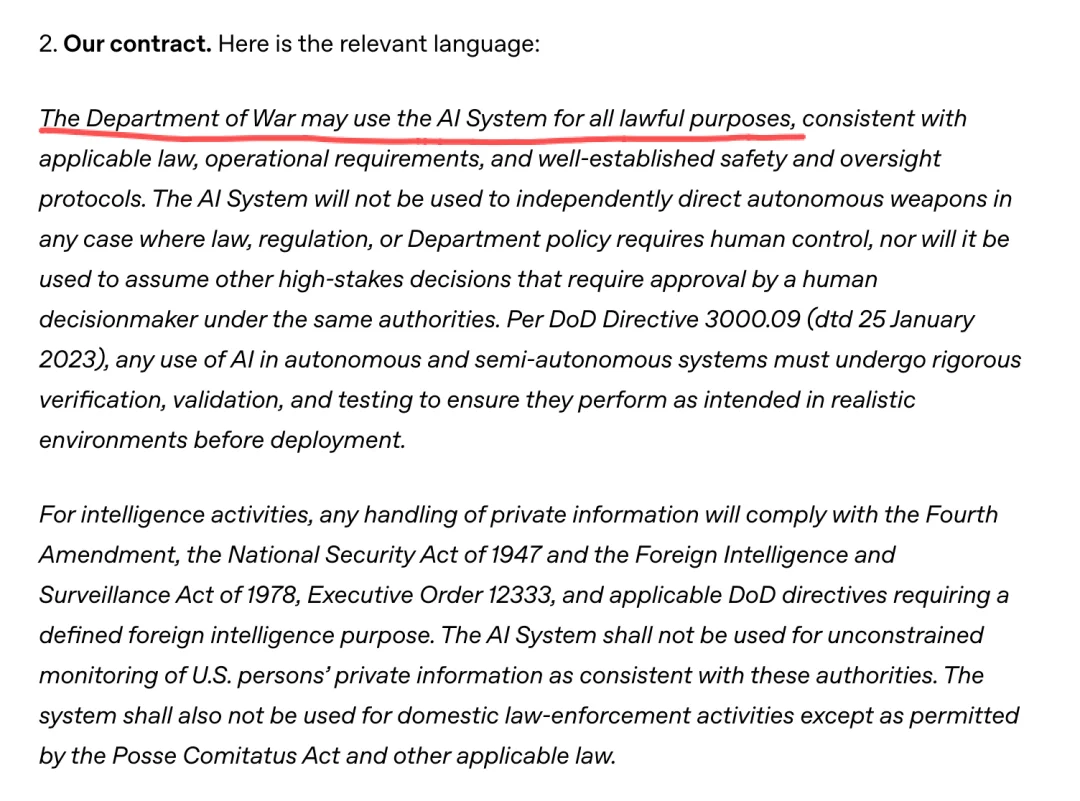

2. Usage Governance

- Authorization limited to “all lawful purposes,” explicitly excluding:

  - Mass domestic surveillance;

  - Domestic law enforcement (except where expressly permitted by statute);

  - Autonomous weapon system engagement without human-in-the-loop approval.

- All intelligence applications must comply with FISA, Executive Order 12333, and NSA oversight protocols.

3. Human Expert Oversight

- Cleared OpenAI deployment engineers and AI alignment researchers embedded end-to-end in DoD workflows.

- Continuous co-development of threat-adaptive safety layers.

FAQ: Addressing Key Concerns

Q1: Why did OpenAI succeed where Anthropic failed?

OpenAI attributes success to enforceable architecture, not weaker principles. Its cloud-native model, combined with full-stack safety ownership and personnel integration, creates verifiable guardrails — unlike policy-only commitments.

Q2: Does this enable autonomous weapons?

❌ No. Edge deployment is prohibited. Cloud latency and human-in-the-loop requirements make real-time lethal autonomy technically infeasible under this framework.

Q3: Will this allow mass surveillance of Americans?

❌ No. The agreement explicitly excludes domestic surveillance outside narrow, court-authorized foreign-intelligence contexts — reinforced by constitutional and statutory constraints.

Q4: What happens if the DoD violates terms?

OpenAI reserves contractual rights to suspend or terminate the agreement immediately. The company states it expects full compliance but maintains legal remedies.

Q5: How does OpenAI respond to Anthropic’s objections?

OpenAI affirms Anthropic’s two original red lines — and adds a third. It notes that Anthropic’s concerns about enforceability are addressed here via architectural control, not just language.

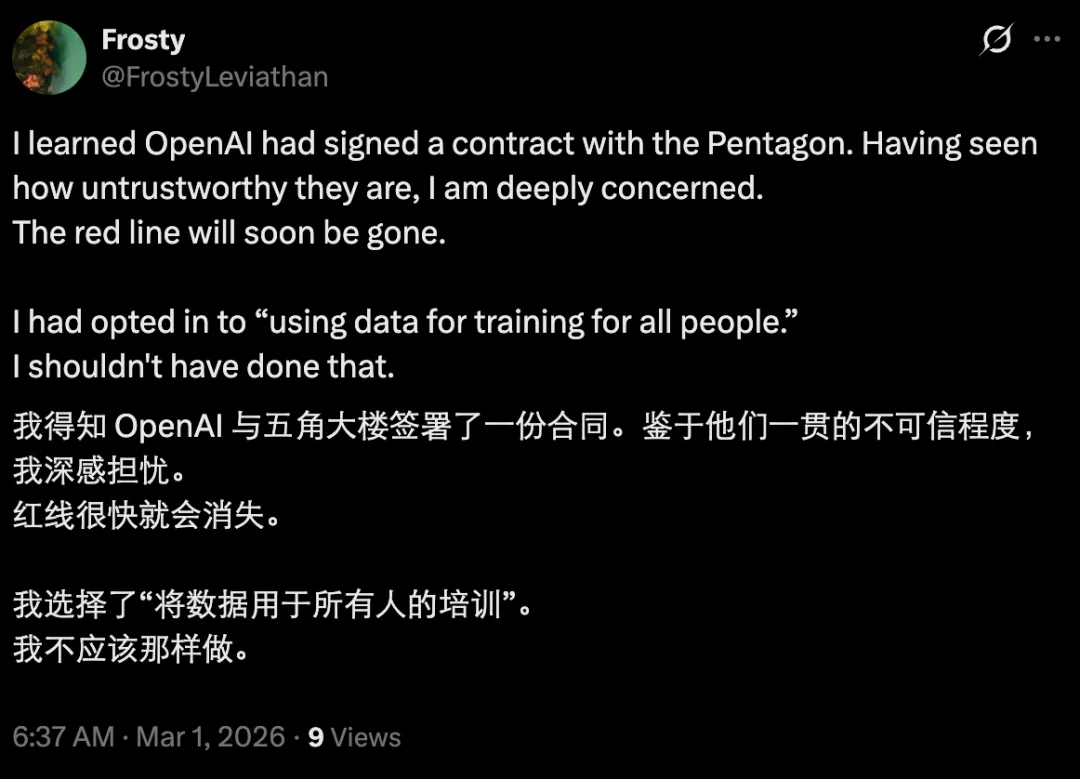

Skepticism Mounts Amid Ambiguous Language

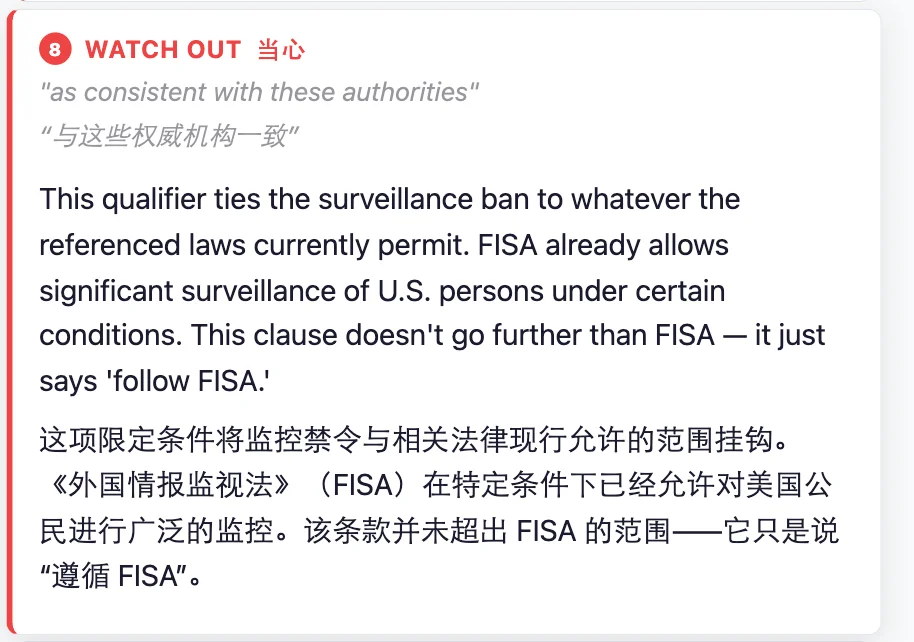

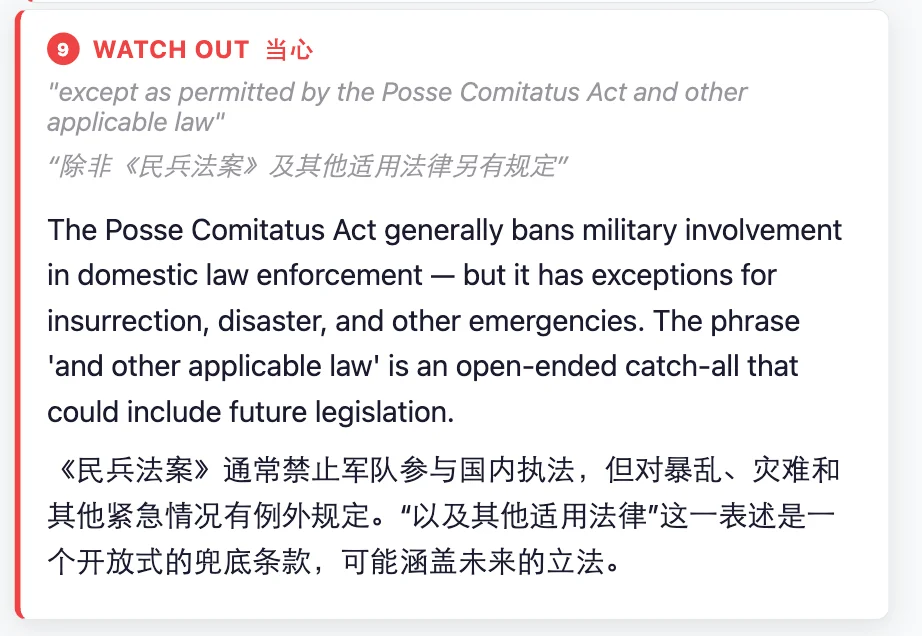

Despite the detailed framework, critics remain unconvinced. Key concerns include:

- Vague phrasing like “all lawful purposes” — subject to reinterpretation amid shifting policy or executive orders;

- Absence of independent third-party audit provisions;

- Reliance on self-monitoring rather than external verification;

- Historical precedent showing how “red lines” erode under mission pressure.

Independent analysts fed the terms into LLMs, and the resulting analyses highlighted linguistic loopholes that could permit scope creep, especially around “lawful” exceptions and definitions of “autonomy.”

Multiple community threads conclude: “Red lines are only as durable as the institutions enforcing them — and history shows they rarely hold.”

Looking Ahead

OpenAI has invited all AI labs — including Anthropic — to adopt identical terms and urged the DoD to resolve its impasse with rival developers. Whether this offer bridges divides or deepens fragmentation remains uncertain.

As one observer noted: “The real test isn’t the press release — it’s what happens when the first urgent operational request arrives at 3 a.m.”

Source: OpenAI Official Blog Post

Originally published by Machine Heart