Nvidia Unveils Vera Rubin GPU and NemoClaw AI Agent Platform

Jensen Huang takes the stage at GTC 2026 in San Jose — a landmark event redefining AI infrastructure and agentic computing.

🔥 A New Era of AI Supercomputing

At GTC 2026, Jensen Huang, dubbed “The King of Tokens,” unveiled Vera Rubin, Nvidia’s next-generation AI supercomputing platform. It is an integrated seven-chip system that combines:

- Rubin GPU

- Vera CPU (second-gen Arm v9.2, 88 Olympus cores, 1.5 TB LPDDR5X)

- NVLink 6 Switch

- ConnectX-9 SuperNIC

- BlueField-4 DPU

- Spectrum-6 Ethernet Switch

- Groq 3 LPU — newly integrated for ultra-low-latency token generation

💡 This is the first time Groq’s LPU architecture has been natively embedded into an Nvidia datacenter platform — enabling deterministic, compiler-scheduled inference pipelines.

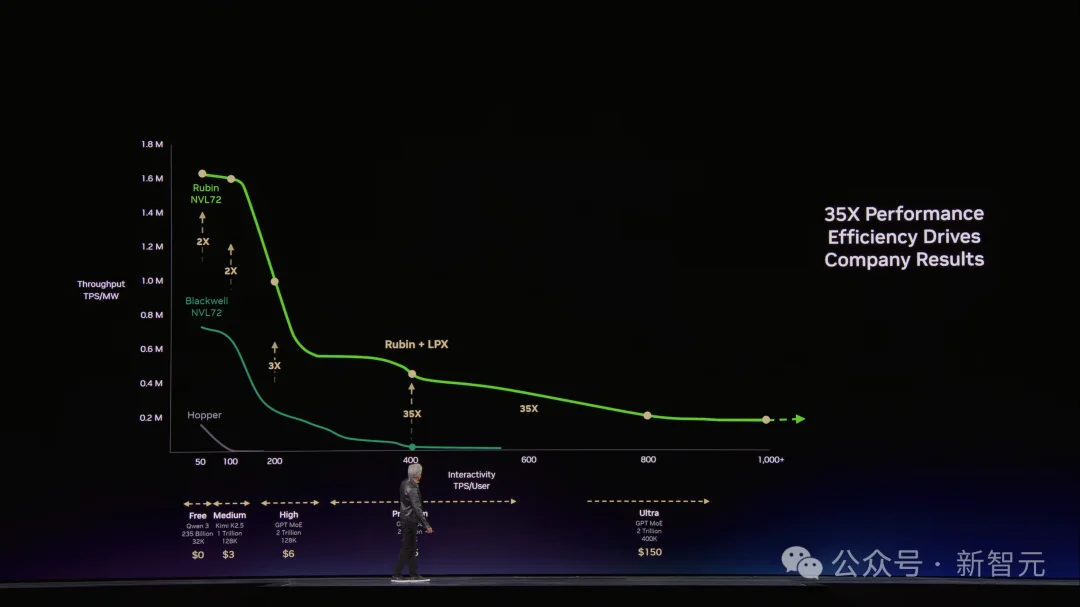

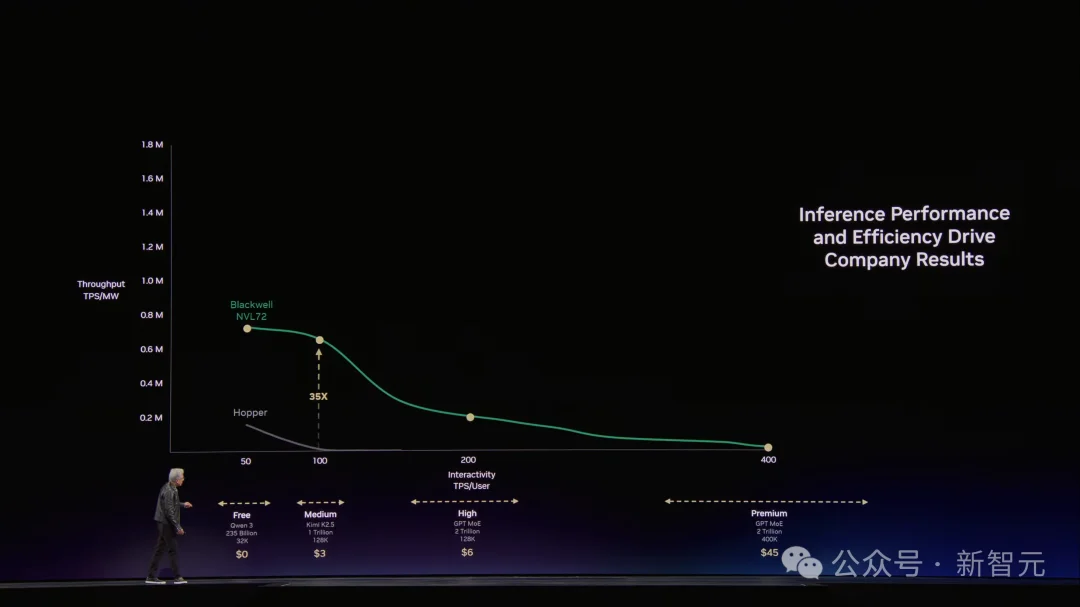

⚡ Performance Leap: 35× Inference Speedup

By intelligently splitting inference workloads:

– Prefill & attention → handled by Rubin GPU (massive KV cache + FP4 compute)

– Decoding & token generation → offloaded to Groq 3 LPU (150 TB/s SRAM bandwidth, 7× the bandwidth of Rubin’s HBM4)

Result: 35× higher throughput in the premium “Super Tier,” where high-value tokens fetch up to $150 per million tokens.
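To see why token generation is the stage that benefits from faster memory, consider a rough bandwidth-bound estimate. The sketch below is a back-of-the-envelope illustration, not Nvidia’s methodology: only the 150 TB/s and 7× figures come from the article, while the model footprint (`HYPOTHETICAL_WEIGHT_BYTES`) and both helper functions are assumptions introduced here. It also ignores SRAM capacity, batching, and KV-cache traffic.

```python
# Figures quoted in the article.
LPU_SRAM_TBPS = 150.0      # Groq 3 LPU SRAM bandwidth, in TB/s
SRAM_VS_HBM_RATIO = 7.0    # SRAM bandwidth relative to Rubin HBM4

# Hypothetical model footprint, NOT from the article:
# 70e9 parameters stored at 4 bits (0.5 bytes) each.
HYPOTHETICAL_WEIGHT_BYTES = 70e9 * 0.5


def implied_hbm_tbps(sram_tbps: float = LPU_SRAM_TBPS,
                     ratio: float = SRAM_VS_HBM_RATIO) -> float:
    """HBM4 bandwidth implied by the article's two figures."""
    return sram_tbps / ratio


def decode_tokens_per_second(weight_bytes: float, bandwidth_tbps: float) -> float:
    """Upper bound on single-stream decode rate, assuming each generated
    token requires reading every weight exactly once from memory."""
    return bandwidth_tbps * 1e12 / weight_bytes


if __name__ == "__main__":
    hbm = implied_hbm_tbps()
    print(f"Implied HBM4 bandwidth: {hbm:.1f} TB/s")
    for name, bw in (("HBM4 (implied)", hbm), ("LPU SRAM", LPU_SRAM_TBPS)):
        rate = decode_tokens_per_second(HYPOTHETICAL_WEIGHT_BYTES, bw)
        print(f"{name}: at most {rate:,.0f} tokens/s per stream")
```

Under these assumptions the decode ceiling scales linearly with memory bandwidth, so the 7× bandwidth gap translates directly into a 7× gap in the per-stream upper bound. The 35× headline figure would have to come from additional factors that this estimate does not model.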

The “CEO Dashboard”: Token throughput (x-axis) vs. energy efficiency (y-axis). Vera Rubin dominates the top-right quadrant — highest speed and highest tokens-per-watt.

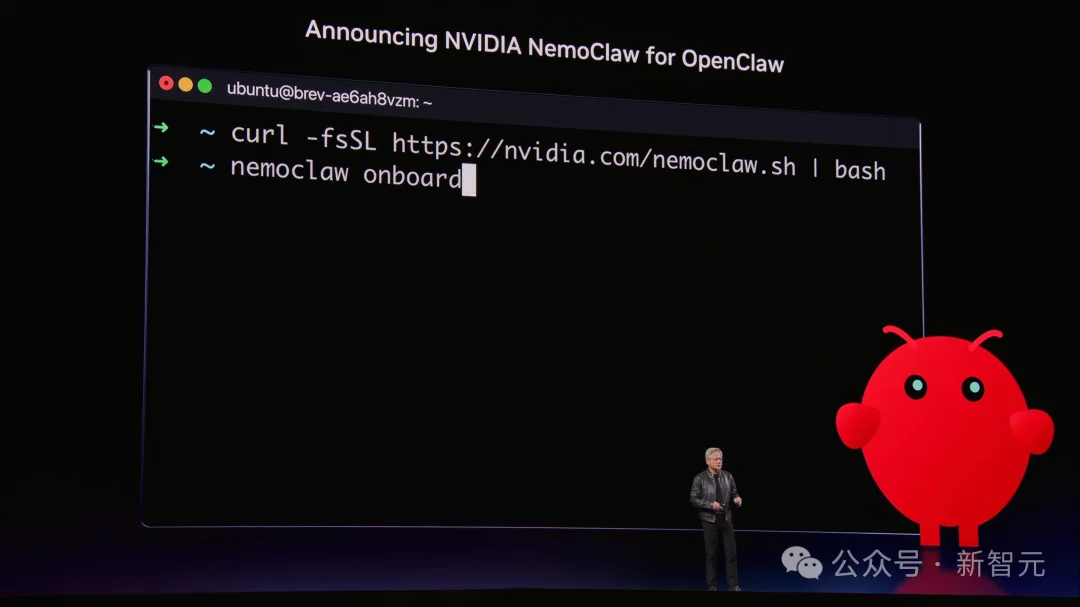

🦞 NemoClaw: Nvidia’s Secure, Hardware-Bound OpenClaw Distribution

As OpenClaw emerges as the de facto open standard for autonomous AI agents (“the operating system for personal AI”), Nvidia has launched NemoClaw, its hardened, enterprise-ready implementation:

✅ OpenShell Runtime: Built-in security sandbox + policy engine for enterprise governance

✅ Nemotron Local Brain: Pre-integrated open-source models for offline, privacy-preserving execution

✅ Hardware Binding: Certified across GeForce RTX PCs, RTX PRO workstations, DGX Spark, and DGX Station, targeting 24/7 agent uptime

🧠 “Mac and Windows are OSes for PCs. OpenClaw is the OS for personal AI.” — Jensen Huang

🌐 From Digital to Physical AI

Beyond software agents, Nvidia also pushed forward on Physical AI:

– Alpamayo 1.5 — real-time open-source reasoning model powering Mercedes-Benz CLA autonomous driving demos

– Cosmos World Model — generates synthetic training data for rare edge cases (e.g., blizzard navigation, construction zone rerouting)

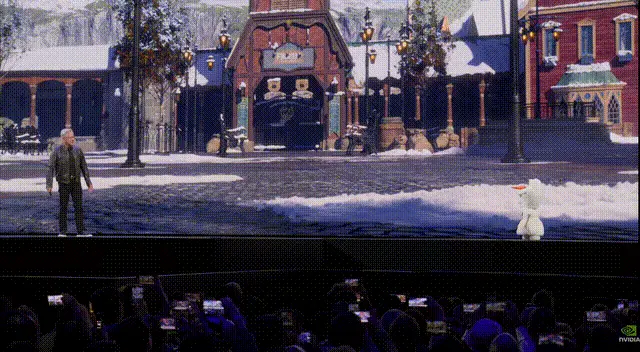

– Olaf the Robot Snowman — Disney’s walking, talking, physics-aware agent powered by Jetson + Omniverse + Newton Engine

🚀 Vera Rubin Space-1 Module — AI compute in orbit, delivering 25× more inference performance than H100 in space.

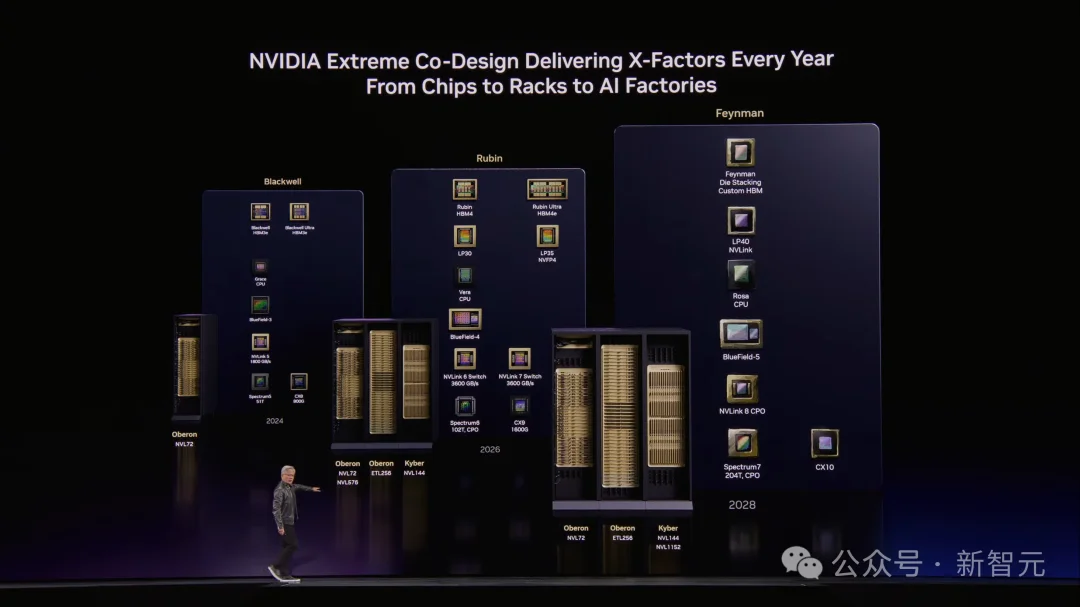

📈 The $1T Roadmap: Blackwell → Rubin → Feynman

Nvidia confirmed its aggressive cadence:

– Blackwell Ultra → Rubin → Rubin Ultra → Feynman (2028)

– Each generation delivers 3–5× inference gains, 2–3× training improvements, and full CPO (Co-Packaged Optics) integration
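The per-generation ranges above compound across the roadmap. The following is a small worked calculation, assuming (this is not stated in the article) that each arrow in the roadmap counts as one full generation and that the gains multiply. The `compounded_range` helper is hypothetical.

```python
ROADMAP = ["Blackwell Ultra", "Rubin", "Rubin Ultra", "Feynman"]

# Assumption: every arrow in the roadmap is one generational step.
STEPS = len(ROADMAP) - 1


def compounded_range(low: float, high: float, steps: int) -> tuple[float, float]:
    """Cumulative gain range if a per-step gain of low..high multiplies over `steps`."""
    return low ** steps, high ** steps


if __name__ == "__main__":
    inf_lo, inf_hi = compounded_range(3.0, 5.0, STEPS)    # 3-5x inference per step
    trn_lo, trn_hi = compounded_range(2.0, 3.0, STEPS)    # 2-3x training per step
    print(f"Inference, {ROADMAP[0]} to {ROADMAP[-1]}: {inf_lo:.0f}x to {inf_hi:.0f}x")
    print(f"Training,  {ROADMAP[0]} to {ROADMAP[-1]}: {trn_lo:.0f}x to {trn_hi:.0f}x")
```

If a mid-cycle part such as Rubin Ultra is treated as a refresh rather than a full generation, the step count drops to two and the cumulative ranges shrink accordingly.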

✅ Conclusion: The AI Factory OS

Nvidia no longer sells just chips. It ships full-stack AI factories, spanning silicon (Rubin), runtime (Dynamo + OpenShell), data (Cosmos), and physical embodiment (Thor, Jetson).

As Huang declared: “Every SaaS company will become a GaaS company — Agentic-as-a-Service.”

References

– Nvidia GTC 2026 Keynote

– Article source: New Intelligence Era, by Peach & Zzz