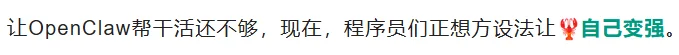

MetaClaw Enables Real-Time Agent Self-Improvement via Conversations

No GPU clusters. No curated datasets. No manual fine-tuning. Just chat — and watch your AI agent evolve in real time.

A Paradigm Shift: Learning from Every Interaction

MetaClaw introduces a groundbreaking online reinforcement learning framework that transforms ordinary user–AI conversations into continuous training signals. Unlike traditional offline RL or supervised fine-tuning, MetaClaw operates transparently in the background, turning every dialogue round into high-fidelity training data — without disrupting user experience.

How It Works

- Intercept & Score: MetaClaw silently intercepts OpenClaw interactions and assigns reward scores per turn using lightweight, adaptive reward modeling.

- Online Policy Optimization: Applies real-time LoRA-based fine-tuning — no full-model retraining required.

- Error-Driven Skill Generation: When an interaction fails, MetaClaw reconstructs the full trajectory, diagnoses root causes, and autogenerates a new skill — instantly stored in the dynamic skill library.

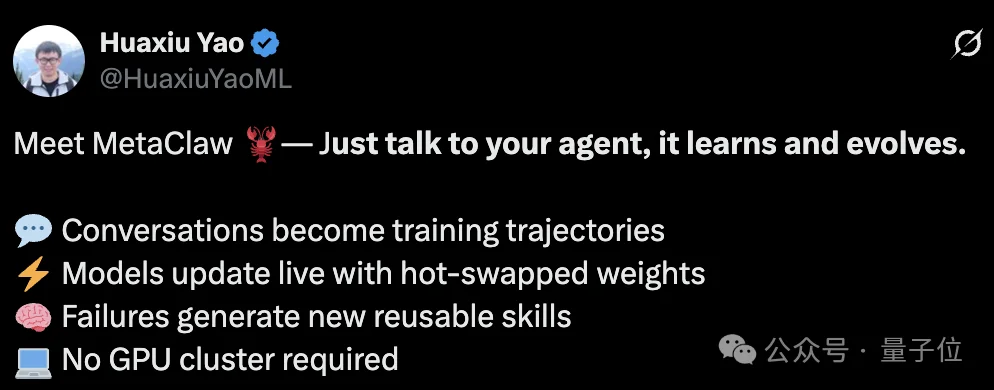

Core Architecture: SkillRL Framework

Built atop Kimi-2.5 (with optional lightweight fallback: Qwen3-4B), MetaClaw’s innovation lies in its dual-phase skill lifecycle:

🔹 Skill Injection

- Dynamically retrieves and injects relevant skills during inference via semantic search over the skill library.

- Zero latency overhead: Skills are applied at prompt-generation time, not post-hoc.

🔹 Skill Evolution

- Converts failure cases into executable skill modules (e.g.,

handle_ambiguous_queries_v2,fallback_to_multistep_reasoning). - Skills are versioned, composable, and automatically prioritized based on context similarity and historical success rate.

Cloud-Native Training: Tinker-Powered Decoupling

MetaClaw eliminates infrastructure friction by fully offloading training to Tinker Cloud:

- ✅ No local GPU cluster needed — only internet connectivity required.

- ✅ Complete separation of inference (client-side) and training (cloud-side).

- ✅ Async architecture: User-facing responses remain sub-500ms while background RL loops run independently.

Flexible Learning Modes

Choose your optimization strategy — or combine both:

| Mode | Mechanism | Use Case |

|---|---|---|

| Implicit RL | Learns from implicit user feedback (e.g., continuation, correction, session length) | Low-overhead, privacy-preserving adaptation |

| Online Policy Distillation | Distills high-quality human/AI feedback (e.g., expert annotations, LLM critiques) into policy updates | High-precision capability lift |

Quick Start: Just 3 Steps

Step 1: Install Dependencies

pip install fastapi uvicorn httpx openai transformers

pip install tinker tinker-cookbook

Step 2: Configure Model Gateway

bash openclaw_model_kimi.sh # Recommended: Kimi-2.5 backend

Step 3: Launch Conversation RL Loop

export TINKER_API_KEY="xxx"

cd /path/to/metaclaw

python examples/run_conversation_rl.py

✅ Then simply converse — MetaClaw handles collection, scoring, training, and hot-swapping model weights automatically.

Optional: Enable Advanced Capabilities

Enable skill injection only:

config = MetaClawConfig(use_skills=True)

Enable full skill evolution (with GPT-5.2 as critic):

config = MetaClawConfig(

use_skills=True,

enable_skill_evolution=True,

azure_openai_deployment="gpt-5.2",

)

export AZURE_OPENAI_API_KEY="xxx"

export AZURE_OPENAI_ENDPOINT="https://your-endpoint.openai.azure.com/"

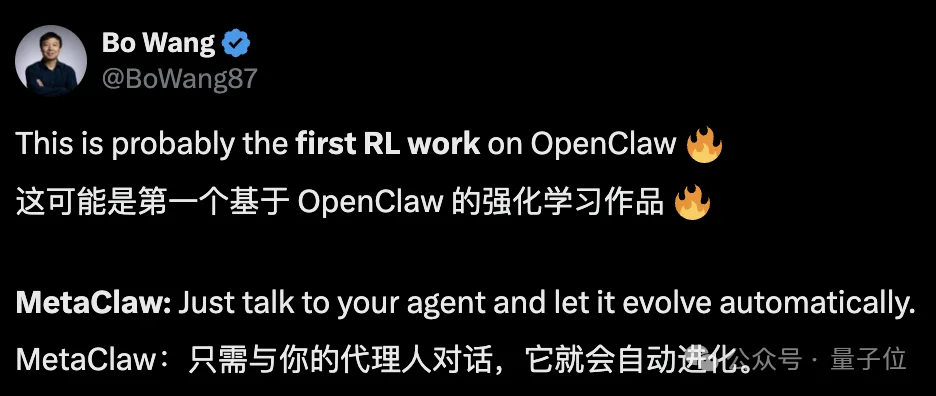

All configuration — model choice, LoRA rank, batch size, loss type, and more — is unified under MetaClawConfig for reproducibility and transparency.

Research Leadership & Open Source

Led by Dr. Huaxiu Yao, Assistant Professor at UNC Chapel Hill Department of Computer Science, former Postdoctoral Fellow at Stanford AI Lab, and alumnus of University of Electronic Science and Technology of China. His work focuses on agentic reasoning, embodied AI, and self-improving systems.

🌐 Official Repository: https://github.com/aiming-lab/MetaClaw

📌 References:

– X Post @BoWang87

– X Post @HuaxiuYaoML

Article originally published by QuantumBit; author: Wen Le.